Llama 3

Open-source model cited as part of the model landscape.

Videos Mentioning Llama 3

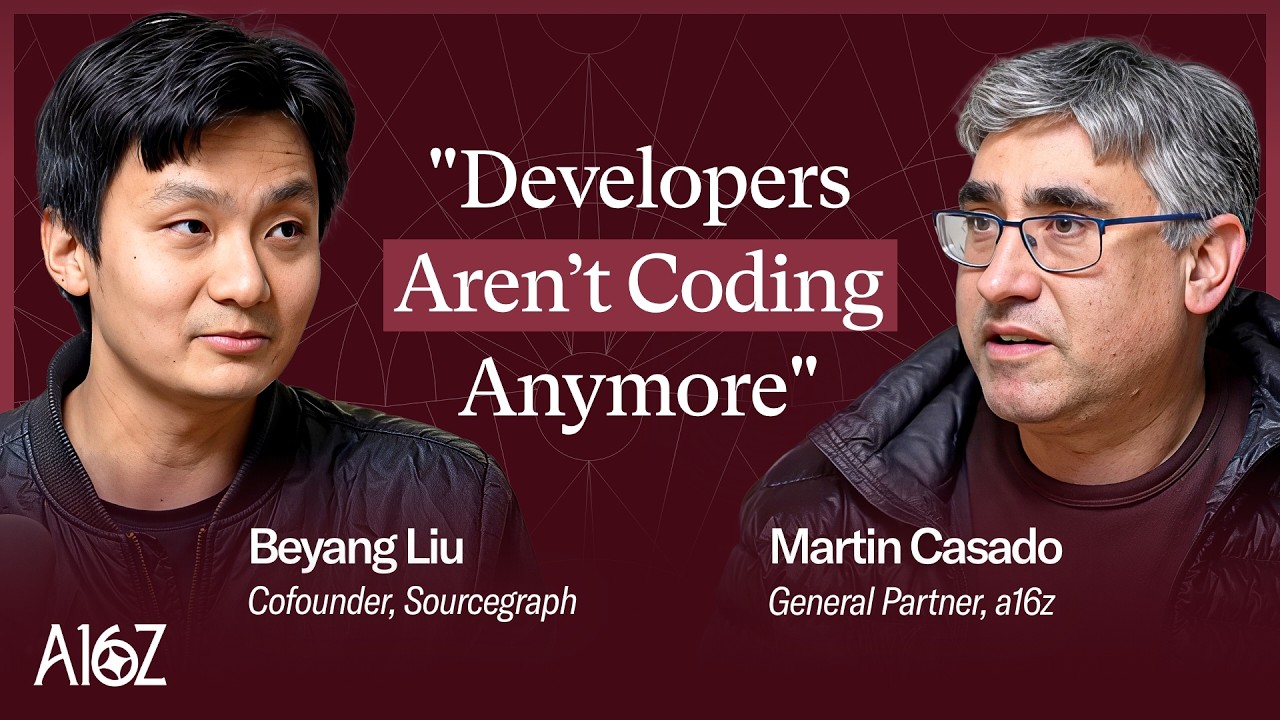

The Truth About Coding Agents: Why 90% of Your Time Is Now Code Review

a16z Deep Dives

Open-source model cited as part of the model landscape.

Segment Anything 2: Memory + Vision = Object Permanence — with Nikhila Ravi and Joseph Nelson

Latent Space

A large language model released by Meta, mentioned in the context of Meta's recent transparency in AI research and disclosures.

The Winds of AI Winter (Q2 Four Wars of the AI Stack Recap)

Latent Space

Meta's latest large language model, discussed for its capabilities, synthetic data usage, and potential for fine-tuning.

Training Llama 2, 3 & 4: The Path to Open Source AGI — with Thomas Scialom of Meta AI

Latent Space

Meta AI's latest large language model, aiming to be the best open-source model and compete with GPT-4, with parameter sizes up to 400B.

The Unreasonable Effectiveness of Reasoning Distillation: using DeepSeek R1 to beat OpenAI o1

Latent Space

A large language model from Meta AI, mentioned as a teacher model whose performance was surpassed by Bespoke Labs' miniature NB model on a specific task.

How to train a Million Context LLM — with Mark Huang of Gradient.ai

Latent Space

The open-source model Gradient chose for context extension because of its perceived capabilities and adaptability. Its initial 8,000-token context length was seen as too short.

2024 Year in Review: The Big Scaling Debate, the Four Wars of AI, Top Themes and the Rise of Agents

Latent Space

An open-source frontier model released in April, delivering on expectations with its 8B and 70B variants, making high-quality models accessible.

Best of 2024: Synthetic Data / Smol Models, Loubna Ben Allal, HuggingFace [LS Live! @ NeurIPS 2024]

Latent Space

A model for which Meta extended pre-training to 15 trillion tokens, achieving better performance at the same model size than earlier LLaMA models.

Best of 2024: Open Models [LS LIVE! at NeurIPS 2024]

Latent Space

Highlighted as a model released in 2024 that reaches frontier-level performance, indicating a narrowing gap with closed models.

The Ultimate Guide to Prompting - with Sander Schulhoff from LearnPrompting.org

Latent Space

A recent model release mentioned alongside papers such as Orca and Evol-Instruct, in the context of training chain-of-thought reasoning into models.

[Paper Club] 🍓 On Reasoning: Q-STaR and Friends!

Latent Space

Mentioned as a general model to which the fine-tuning method discussed could potentially be applied.

Is finetuning GPT4o worth it?

Latent Space

A language model whose paper discussed synthetic data generation for code, relevant to Cosine's training methodology.

Jason Boehmig, CEO of Ironclad on Balancing Risk, Innovation, and AI Opportunity in the Legal Field

AssemblyAI

The Four Wars of the AI Stack - Dec 2023 Recap

Latent Space

Still in training at the time of recording and expected to be a strong contender in the AI model space.

Beating GPT-4 with Open Source Models - with Michael Royzen of Phind

Latent Space

The next iteration of Meta's LLaMA models, on the horizon at the time of the discussion, expected to further advance open-source LLM capabilities.

The Shape of Compute (Chris Lattner of Modular)

Latent Space

A large language model mentioned as a benchmark for state-of-the-art performance in serving.

Sam Altman: Getting Fired (and Re-Hired) by OpenAI, Agents, AI Copyright issues

All-In Podcast

An open-source language model that has reportedly caught up to GPT-4 in many dimensions.

The Engineering Unlocks Behind DeepSeek | YC Decoded

Y Combinator

A model from Meta, contrasted with DeepSeek V3 on parameter activation: Llama 3 is a dense model that activates all 405 billion parameters, while V3 uses a Mixture-of-Experts architecture that activates only a subset of its parameters.