Key Moments

Beating GPT-4 with Open Source Models - with Michael Royzen of Phind


Phind's CEO discusses building an AI search engine for programmers, beating GPT-4 with open-source models, and the future of AI.

Key Insights

Phind's journey began with computer vision and evolved into an AI-powered search engine specifically for developers.

The company leverages open-source models and extensive data to compete with and, in some cases, surpass proprietary models like GPT-4.

Phind emphasizes a 'quality-first' approach, optimizing for accurate and complex problem-solving, especially in coding.

The development of Phind is driven by a belief in a future where AI handles implementation, freeing humans for problem-solving and idea generation.

Key milestones include the transition from proprietary models to fine-tuned open-source models and strategic partnerships, like with NVIDIA.

The future of Phind involves continued innovation in efficient model training, local model execution, and enhanced reasoning capabilities.

EARLY BEGINNINGS AND THE SPARK OF INNOVATION

Michael Royzen's entrepreneurial journey started in high school with SmartLens, a computer vision startup. Inspired by advancements in deep learning and on-device AI, he developed a model that could recognize a vast array of objects, even outperforming Google Lens at the time in terms of speed and local processing. This early success in computer vision laid the groundwork for his future ventures, demonstrating an early knack for identifying market needs and developing innovative solutions.

TRANSITION TO NLP AND THE BIRTH OF THE SEARCH ENGINE CONCEPT

Royzen's exploration into Natural Language Processing (NLP) began with building an enterprise invoice processing product. This led him to Hugging Face and models like BERT and Longformer, where he encountered the limitations of fixed context windows. A pivotal moment was a demo of a long-form question-answering system, which sparked the idea of using a large-scale index combined with an LLM for answering questions, a concept that would become the foundation for Phind.

DEVELOPING THE CORE TECHNOLOGY AND SCALING THE INDEX

The initial concept of Phind involved fine-tuning BART models on the ELI5 dataset and building a massive search index from Common Crawl data. Despite the significant cost and technical challenges, Royzen successfully created a system capable of answering general knowledge questions. This early iteration, though shelved at times due to resource constraints, proved the viability of large-scale indexed retrieval powering LLM responses, a crucial step towards a comprehensive AI search engine.

THE RISE OF PHIND: FROM HELLO COGNITION TO A PROGRAMMER'S TOOL

The journey continued with the release of the T0 models, which provided a significant leap in reasoning capabilities. Royzen developed Phind's first functional system, connecting a fine-tuned T0 model to an Elasticsearch index, effectively creating an internet-scale RAG (Retrieval-Augmented Generation) system. This led to the founding of Phind, initially as Hello Cognition, with a pivot towards serving programmers, recognizing the specialized needs and complex problem-solving required in software development.
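The retrieve-then-generate pattern described above can be illustrated with a minimal sketch. This is not Phind's implementation: the in-memory `DOCUMENTS` dictionary stands in for a search index such as Elasticsearch, the token-overlap scoring is a toy substitute for a real ranking function, and `generate` is a hypothetical callable standing in for the language model. All names here are assumptions made for illustration.

```python
import re
from collections import Counter

# Hypothetical toy corpus standing in for an internet-scale search index.
DOCUMENTS = {
    "doc1": "Python lists are mutable sequences; use append to add an item.",
    "doc2": "Rust ownership rules prevent data races at compile time.",
    "doc3": "In Python, a tuple is an immutable sequence.",
}


def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def retrieve(query, documents, k=2):
    """Return up to k document ids ranked by shared tokens (toy scoring)."""
    query_tokens = Counter(tokenize(query))
    scored = []
    for doc_id, text in documents.items():
        overlap = sum((query_tokens & Counter(tokenize(text))).values())
        scored.append((overlap, doc_id))
    # Highest overlap first; ties broken by document id for determinism.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [doc_id for overlap, doc_id in scored[:k] if overlap > 0]


def build_prompt(query, documents, doc_ids):
    """Place the retrieved passages ahead of the question."""
    context = "\n".join(f"[{d}] {documents[d]}" for d in doc_ids)
    return f"Context:\n{context}\n\nQuestion: {query}\nAnswer:"


def answer(query, documents, generate):
    """Retrieve context, then hand the assembled prompt to the model.

    `generate` is a hypothetical callable (prompt -> text); any LLM client
    could be wrapped to fit this shape.
    """
    doc_ids = retrieve(query, documents)
    return generate(build_prompt(query, documents, doc_ids))
```

Passing `generate=lambda prompt: prompt` returns the assembled prompt unchanged, which is a convenient way to inspect what the model would see before a real model is wired in.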

LEVERAGING GPT-4 AND THE SHIFT TO OPEN SOURCE MODELS

Phind's adoption of GPT-4 as its core reasoning engine proved to be a major turning point, significantly boosting its capabilities and user engagement. This period also saw strategic partnerships and attention from platforms like Hacker News. However, with the rise of powerful open-source models like LLaMA, Phind began a strategic shift towards fine-tuning and deploying these models. The hypothesis is that with vast amounts of high-quality data, open-source models can rival or even surpass proprietary counterparts for specific verticals.

THE PHIND MODEL: ACHIEVING STATE-OF-THE-ART PERFORMANCE

Phind's commitment to open source led to the development of their own fine-tuned models, which have topped the BigCode Leaderboard, demonstrating superior performance, especially in non-Python languages. The company's approach involves training on large volumes of data and a focus on practical, real-world evaluations, including using GPT-4 as an internal evaluator. This strategy allows Phind to offer models that are competitive with, and sometimes superior to, leading proprietary solutions, while maintaining control and customization.
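Using a strong model as an evaluator usually amounts to a pairwise comparison: a judge sees two answers to the same prompt and picks the better one. The sketch below shows that bookkeeping only, under stated assumptions. The `judge`, `candidate`, and `baseline` callables are hypothetical placeholders, and alternating the answer order is a common precaution against position bias rather than something the episode confirms Phind does.

```python
def win_rate(prompts, candidate, baseline, judge):
    """Fraction of prompts on which the judge prefers the candidate.

    candidate, baseline: hypothetical callables mapping prompt -> answer.
    judge: hypothetical callable (prompt, answer_a, answer_b) -> "A" or "B";
           in practice this would wrap a call to an evaluator model.
    """
    if not prompts:
        raise ValueError("prompts must not be empty")
    wins = 0
    for i, prompt in enumerate(prompts):
        cand, base = candidate(prompt), baseline(prompt)
        if i % 2 == 0:
            # Candidate shown in slot A on even-indexed prompts.
            wins += judge(prompt, cand, base) == "A"
        else:
            # Candidate shown in slot B on odd-indexed prompts.
            wins += judge(prompt, base, cand) == "B"
    return wins / len(prompts)
```

A stub judge, for example one that always prefers the longer answer, is enough to check the plumbing before a real evaluator model is attached.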

PRODUCT PHILOSOPHY AND USER EXPERIENCE

Phind operates on a 'quality-first' principle, prioritizing accurate and sophisticated answers, particularly for technical users. This means sometimes sacrificing speed for complex queries. The platform offers web and VS Code integrations, aiming to unblock developers quickly. Features like conversational pair programming and the ability to prioritize messages are designed to enhance the user experience and manage long, complex interactions effectively.

THE FUTURE OF PHIND: INNOVATION AND ACCESSIBILITY

Looking ahead, Phind aims to further optimize model performance, explore longer context windows, and potentially enable efficient local model execution. The company is researching reinforcement learning techniques aimed at correctness and exploring new hardware capabilities like FP8. The overarching vision is to build a powerful, accessible technical reasoning engine that supports the entire software development lifecycle, from idea to execution, democratizing advanced AI capabilities.

THE PAUL GRAHAM AND RON CONWAY ENCOUNTERS

Royzen shared pivotal stories about meeting Paul Graham and Ron Conway. Graham's strategic advice, including suggesting the 'Phind' name, and his investment marked a significant validation. Conway's introduction to NVIDIA's CEO, Jensen Huang, was instrumental in securing crucial GPU resources, highlighting the importance of strategic networking and mentorship in the startup ecosystem.

ADVICE FOR ASPIRING ENTREPRENEURS AND DEVELOPERS

Royzen advises aspiring entrepreneurs to pursue ideas that genuinely obsess them, rather than starting a business out of boredom or general interest. He emphasizes that this deep-seated belief and passion are crucial for navigating the challenges of building a company. For developers, he suggests using tools like Phind as a technical research assistant to formalize assumptions and accelerate learning, reflecting his own self-taught approach to mastering complex technical domains.

Common Questions

Phind is an AI-powered search engine and assistant specifically designed for programmers. It helps developers get unblocked and find answers to technical questions quickly by providing relevant context and powerful reasoning capabilities.

Mentioned in this video

A feature within ChatGPT that allows users to upload files and use Python code to analyze and manipulate data, seen as a model for future Phind capabilities.

A model from Google that builds on T5 by incorporating instruction tuning, mentioned as a 2022 release that followed the T0 model's instruction tuning approach.

The next iteration of Meta's LLaMA models, on the horizon at the time of the discussion, expected to further advance open-source LLM capabilities.

A large multilingual language model developed by BigScience, mentioned as having potential but ultimately underperforming due to training data and fine-tuning issues.

A visual search engine developed by Google that allows users to search using images. SmartLens' early version reportedly performed better.

A denoising autoencoder for pretraining sequence-to-sequence models, used in an early Hugging Face demo for long-form question answering and later fine-tuned by Michael Royzen.

A leaderboard that ranks the performance of code generation models. Phind's models have achieved top positions on this leaderboard.

A family of large language models developed by Meta AI. LLaMA 2 is discussed as a foundation for Phind's own model development.

A popular AI chatbot developed by OpenAI, often used as a benchmark or alternative for LLM capabilities. Phind's audience sometimes switches from ChatGPT.

The initial name of the Phind product when it was first launched on Hacker News, before being rebranded.

A large language model from Meta AI specifically trained for coding tasks, mentioned as the base model for Phind's fine-tuned models.

Amazon Web Services, the cloud computing platform used by Michael Royzen to process and index Common Crawl data for his search engine.

Google's Search Generative Experience, a feature that brings generative AI to Google Search, predicted to commoditize simpler search queries.

A language for programmatically controlling LLM output, mentioned as a potential tool for restricting model behavior and addressing hallucinations.

Visual Studio Code, a popular source-code editor. Phind has a significant integration and extension for VS Code.

An earlier version of the GPT models fine-tuned to follow instructions, mentioned as a predecessor to advanced instruction-tuned models.

The featured snippets that Google displays at the top of search results, serving as an early analogy for LLM-powered summarization.

OpenAI's most advanced language model at the time of recording, powering Phind's core reasoning engine and leading to significant improvements and user growth.

A C++ implementation of Meta's LLaMA models, enabling efficient local execution on consumer hardware, mentioned as a key tool for local LLMs.

A browser-based IDE and collaborative platform for programming. Phind's relationship with Replit, both as a potential partner and competitor, is discussed.

A foundational transformer-based language model developed by Google, mentioned as an encoder model used by Michael Royzen before Longformer.

A large multitask language model released by BigScience, noted for its significant jump in reasoning ability and instruction tuning capabilities.

The second generation of Meta AI's LLaMA large language models, serving as the foundation for Phind's own fine-tuned models.

An experimental open-source application that depicts GPT-4 as an autonomous agent capable of achieving goals through task decomposition and execution.

A powerful open-source software library for machine learning and artificial intelligence, mentioned as a key development in the deep learning revolution.

A highly configurable text editor and IDE, mentioned as an example of an alternative development environment that users might prefer over VS Code.

A transformer-based language model designed to handle much longer sequences than standard BERT, which Michael Royzen utilized for its larger context window.

A framework for developing applications powered by language models. Their work on evaluating RAG systems and potential structured evaluation platforms is noted.

An AI-first code editor that aims to deeply integrate LLMs into the IDE experience, mentioned in comparison to Phind's approach.

A tool or feature mentioned by Michael Royzen for running side-by-side comparisons of LLM outputs, used to confirm Phind's model performance against GPT-4.

A software development company known for its IDEs (Integrated Development Environments) like PyCharm, mentioned as part of the diverse developer tool landscape.

A multinational technology company known for its graphics processing units (GPUs) and AI technologies. They provided crucial support and hardware to Phind.

A platform and community for machine learning, where Michael Royzen first encountered NLP models like BERT and Longformer for his invoice processing project.

The AI research lab behind models like GPT-3 and GPT-4. They were supportive of Phind during the early GPT-4 API access period.

A startup accelerator that provided funding and mentorship to Phind. The story of their acceptance and interactions with YC partners like Paul Graham is detailed.

A search engine and AI assistant specifically designed for programmers, aiming to provide accurate and contextually relevant answers to technical questions.

CEO of NVIDIA, who quickly responded to Ron Conway's introduction and helped Phind secure GPU resources.

Co-founder of Y Combinator, a prominent figure in the startup world. He provided naming advice and invested in Phind.

The CEO of Replit, mentioned as having discussions with Michael Royzen about integrating capabilities like code execution within Phind.

Co-founder of Y Combinator and wife of Paul Graham, recognized for her understanding of people and her significant role in YC.

A legendary angel investor in Silicon Valley who invested in Phind shortly after Paul Graham, facilitating crucial introductions, including to NVIDIA.

A massive, open repository of web crawl data, used by Michael Royzen to build an extensive search index for ELI5-based models.

A floating-point format using 8 bits, implemented by NVIDIA in their Transformer Engine for potentially faster and more efficient deep learning model inference with minimal quality loss.

More from Latent Space

View all 225 summaries 41 min

41 min⚡️Making DeepSeek v4 outperform Opus 4.7 with Taste — @AhmadAwais , CommandCode.ai

78 min

78 minWhen AI Agents Run Businesses — Lukas Petersson and Axel Backlund of Andon Labs

94 min

94 minScaling Past Informal AI - Carina Hong, Axiom Math

42 min

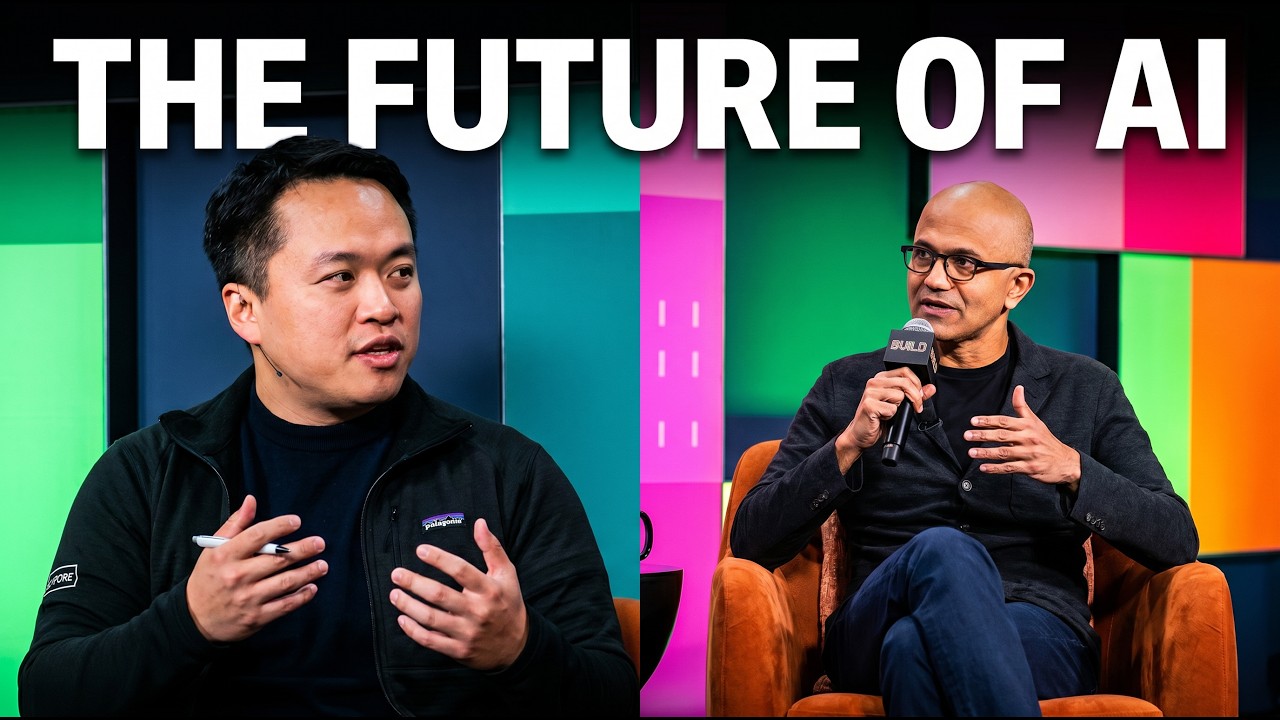

42 minSatya Nadella on AI: @NoPriorsPodcast x Latent Space Crossover Special at Microsoft Build 2026

Ask anything from this episode.

Save it, chat with it, and connect it to Claude or ChatGPT. Get cited answers from the actual content — and build your own knowledge base of every podcast and video you care about.

Get Started Free