NVLink

A high-speed interconnect technology from NVIDIA that enables GPUs to scale efficiently; with Blackwell, up to 576 GPUs can share a coherent memory space for training.

Videos Mentioning NVLink

Open Source AI is AI we can Trust — with Soumith Chintala of Meta AI

Latent Space

NVIDIA's high-bandwidth, low-latency interconnect technology, often highlighted for its capabilities.

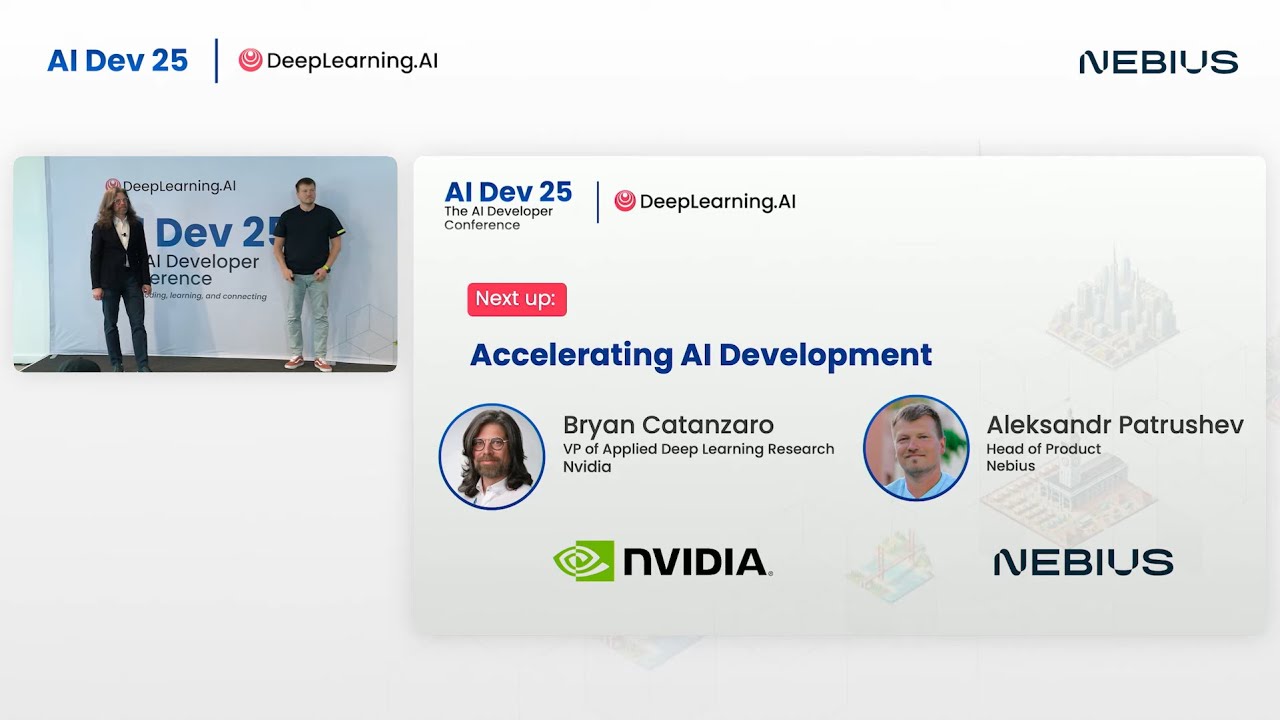

AI Dev 25 | Bryan Catanzaro & Aleksandr Patrushev: Accelerating AI Development

DeepLearningAI

A high-speed interconnect technology from NVIDIA that enables GPUs to scale efficiently; with Blackwell, up to 576 GPUs can share a coherent memory space for training.

Stanford CS336 Language Modeling from Scratch | Spring 2026 | Lecture 8: Parallelism

Stanford Online

A high-speed interconnect, recommended for keeping expert and tensor parallelism within a single machine.

Stanford CS336 Language Modeling from Scratch | Spring 2026 | Lecture 7: Parallelism

Stanford Online

NVIDIA's high-speed interconnect technology for connecting GPUs within a server node, enabling high-bandwidth communication.

Stanford CS153 Frontier Systems | The Discipline of Delivering Value per Gigawatt

Stanford Online

A high-speed interconnect technology developed by NVIDIA for scaling GPUs, discussed as a factor in system balance.