The new Claude 3.5 Sonnet, Computer Use, and Building SOTA Agents — with Erik Schluntz, Anthropic

Key Moments

The upgraded Claude 3.5 Sonnet achieves state-of-the-art results on SWE-Bench Verified; the episode covers agent architecture, tool design, and computer use.

Key Insights

The upgraded Claude 3.5 Sonnet has set a new state of the art on SWE-Bench Verified, a benchmark for coding agents.

SWE-Bench focuses on real-world repository tasks, offering a more practical evaluation than isolated coding puzzles.

The agent architecture is minimal: Claude decides its own approach and which tools to use, and keeps working until it considers the task complete or fills its context window.

Tool design is crucial; iterating on tools with robust documentation, examples, and explicit instructions (like using absolute paths) makes them more foolproof for LLMs.

Computer use, like a robot that presses elevator buttons rather than waiting for an elevator API, greatly reduces the integration work LLMs need in order to act in the world.

While LLMs excel at common-sense reasoning and diffusion models help with path planning in robotics, achieving high reliability and viable unit economics remains a major challenge.

ACHIEVING STATE-OF-THE-ART IN CODING AGENTS

The discussion highlights Anthropic's recent success with Claude 3.5 Sonnet, which achieved state-of-the-art performance on the SWE-Bench Verified benchmark. The result matters because SWE-Bench evaluates agents on real-world coding tasks within software repositories, a more practical assessment than traditional, isolated coding challenges. Anthropic also published the agent's architecture and prompts so that developers can build on these findings and get the most out of its models.

UNDERSTANDING SWE-BENCH AND ITS VERIFICATION

SWE-Bench, a benchmark for evaluating coding agents, draws its tasks from real GitHub issues in popular open-source Python repositories. What distinguishes it is that agents must navigate and modify an existing codebase rather than solve an isolated problem. SWE-Bench Verified refines this further: humans manually reviewed the tasks and filtered out ambiguous or impossible ones, so that every remaining task is solvable from the information provided. This verified subset was produced by OpenAI, with the aim of giving a more accurate picture of real engineering challenges.
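SWE-Bench grades an agent's patch by running the repository's own tests: tests that reproduce the issue (the dataset's FAIL_TO_PASS list) must now pass, and tests that already passed (PASS_TO_PASS) must not break. Below is a minimal sketch of that pass/fail rule, assuming the post-patch test outcomes have already been collected into a dictionary; `is_resolved` and the test IDs are hypothetical names for illustration, not the official harness.

```python
def is_resolved(fail_to_pass: list[str], pass_to_pass: list[str],
                outcomes: dict[str, bool]) -> bool:
    """Return True only if the patch fixes the issue without regressions.

    fail_to_pass: tests that reproduce the issue and must now pass.
    pass_to_pass: tests that passed before the patch and must still pass.
    outcomes: test ID -> passed?, collected after applying the agent's patch.
    """
    required = fail_to_pass + pass_to_pass
    # A test missing from `outcomes` (crashed, not collected) counts as a failure.
    return all(outcomes.get(test_id, False) for test_id in required)


# Hypothetical test IDs: the bug is fixed, but an existing test now fails.
outcomes = {"test_issue_repro": True, "test_existing_a": True, "test_existing_b": False}
print(is_resolved(["test_issue_repro"], ["test_existing_a", "test_existing_b"], outcomes))
# prints False
```

Requiring both lists to pass is what stops an agent from "fixing" an issue by deleting or breaking unrelated behavior.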

AGENT ARCHITECTURE AND TOOL USAGE

The agent architecture is deliberately minimal and gives Claude substantial autonomy. The agent is handed a set of tools, then repeatedly thinks, calls a tool, and observes the result until it decides the task is complete or reaches the context-window limit. Unlike more rigid, predefined workflows, this lets the model adapt its strategy as it goes, which suits the upgraded Claude 3.5 Sonnet's improved ability to correct its own mistakes. The main downside is token cost; future work might focus on pruning unproductive paths.
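The loop described above (think, call a tool, observe, repeat until the model declares the task done or the context budget runs out) can be sketched in a few lines. This is a toy under stated assumptions, not Anthropic's released scaffold: `run_agent`, the `("tool", name, arg)` / `("done", answer)` step format, the word-count token estimate, and the scripted model are all hypothetical.

```python
from typing import Callable, Optional

# A step is either ("tool", tool_name, argument) or ("done", final_answer).
Step = tuple
Transcript = list


def run_agent(model: Callable[[Transcript], Step],
              tools: dict[str, Callable[[str], str]],
              task: str,
              max_tokens: int = 1000) -> tuple[Optional[str], Transcript]:
    transcript: Transcript = [("user", task)]
    used = len(task.split())  # crude token estimate: whitespace-separated words
    while used < max_tokens:
        step = model(transcript)              # think: the model picks the next move
        if step[0] == "done":
            return step[1], transcript        # the model decided it is finished
        _, name, arg = step
        observation = tools[name](arg)        # act: run the chosen tool
        transcript.append(("assistant", f"{name}({arg!r})"))
        transcript.append(("tool", observation))  # observe: feed the result back
        used += len(observation.split()) + 1
    return None, transcript                   # context budget exhausted


def scripted_model(transcript: Transcript) -> Step:
    # Hypothetical stand-in for an LLM call: inspect the repo once, then stop.
    if len(transcript) == 1:
        return ("tool", "bash", "ls")
    return ("done", "task complete")


tools = {"bash": lambda cmd: "setup.py src tests"}  # fake tool output
answer, transcript = run_agent(scripted_model, tools, "Fix the failing test")
print(answer, len(transcript))
```

All strategy lives in `model`; the harness only executes tools and enforces the budget, which mirrors the "minimal scaffold, maximal model control" design the episode describes.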

THE CRITICAL ROLE OF TOOL DESIGN AND ENGINEERING

Significant engineering effort was dedicated to tool design, with a focus on making them 'foolproof' for LLMs. For example, mandating absolute paths for file operations prevents confusion when the agent changes directories. Similarly, providing explicit instructions within tool descriptions, such as avoiding commands that don't return or advising against launching interactive programs like Vim, greatly improves reliability. This iterative process of testing tool usage and refining descriptions is presented as a key strategy for effective LLM agent development.
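A minimal sketch of the guardrails mentioned above, assuming a Linux sandbox: the tool description itself tells the model to use absolute paths, and the implementation returns a readable error (instead of crashing or hanging) when the model slips. The tool name `view_file`, the helper functions, and the list of interactive programs are hypothetical; only the `name`/`description`/`input_schema` shape follows the Anthropic Messages API tool format.

```python
import posixpath
from typing import Optional

# Tool definition in the shape the Anthropic Messages API uses for tools
# (name, description, input_schema). The tool and its wording are illustrative.
VIEW_FILE_TOOL = {
    "name": "view_file",
    "description": (
        "Read a file from the repository. `path` must be an ABSOLUTE path, "
        "e.g. /repo/src/main.py. Relative paths are rejected because the "
        "working directory may have changed since the last command."
    ),
    "input_schema": {
        "type": "object",
        "properties": {
            "path": {"type": "string", "description": "Absolute path to the file."}
        },
        "required": ["path"],
    },
}

# Programs that wait for keyboard input and would hang a non-interactive shell.
INTERACTIVE_PROGRAMS = {"vim", "vi", "nano", "less", "top"}


def check_path(path: str) -> Optional[str]:
    """Return an error message the model can act on, or None if the path is OK."""
    if not posixpath.isabs(path):
        return f"Error: {path!r} is relative. Retry with an absolute path starting with '/'."
    return None


def check_command(command: str) -> Optional[str]:
    """Reject shell commands that launch an interactive program and never return."""
    words = command.split()
    if words and posixpath.basename(words[0]) in INTERACTIVE_PROGRAMS:
        return (f"Error: {words[0]!r} is interactive and will not return. "
                "Use a non-interactive command such as cat or sed instead.")
    return None


print(check_path("src/main.py"))
print(check_command("vim setup.py"))
```

Returning the error as text, rather than raising, keeps the agent loop running and gives the model a concrete hint for its next attempt.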

COMPUTER USE AS A LOW-FRICTION INTEGRATION TOOL

Computer use is framed as a revolutionary way for LLMs to interact with the digital world, analogous to a robot using its arm to press elevator buttons instead of waiting for an elevator API integration. Given a browser and login credentials, the model can immediately perform actions across many applications. This drastically reduces the engineering overhead that API integrations usually require, makes it possible to deploy sophisticated tools for customer support and other uses quickly, and changes how LLMs interface with existing software systems.
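To make the elevator-button analogy concrete: instead of writing a separate client for each application's API, a computer-use agent emits a small, fixed vocabulary of generic actions (take a screenshot, click at coordinates, type text) that work on any GUI. The sketch below is hypothetical throughout: `FakeScreen` stands in for a real virtual display, and the action names are illustrative rather than the exact schema of Anthropic's computer-use tool.

```python
from dataclasses import dataclass, field


@dataclass
class FakeScreen:
    """Hypothetical stand-in for a virtual display running any GUI application."""
    cursor: tuple = (0, 0)
    typed: list = field(default_factory=list)

    def execute(self, action: dict) -> str:
        kind = action.get("action")
        if kind == "screenshot":
            # A real harness would return an image for the model to look at.
            return f"<screenshot, cursor at {self.cursor}>"
        if kind == "click":
            self.cursor = tuple(action["coordinate"])
            return f"clicked at {self.cursor}"
        if kind == "type":
            self.typed.append(action["text"])
            return f"typed {action['text']!r}"
        return f"error: unknown action {kind!r}"


# The same three generic actions could drive a helpdesk, a CRM, or a spreadsheet,
# with no application-specific API client.
screen = FakeScreen()
for action in [
    {"action": "screenshot"},
    {"action": "click", "coordinate": [120, 340]},
    {"action": "type", "text": "Refund order 1234"},
]:
    print(screen.execute(action))
```

The integration cost moves from "one client per application" to "one screen driver for everything", which is the friction reduction the paragraph describes.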

ROBOTICS: PROGRESS, HYPE, AND CHALLENGES

Despite the burnout Erik experienced as a robotics co-founder, he sees significant positive progress in the field. General-purpose language models now bridge the gap in describing complex tasks, and diffusion models are transforming path planning. However, robotics is surrounded by considerable hype, much like the early days of self-driving cars. The main hurdles remain reliability (moving from 99% to 99.9% success rates) and unit economics: current hardware is expensive, which makes widespread adoption harder than for software-based AI agents.
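The gap between 99% and 99.9% is larger than it looks once a robot chains many actions together. Assuming each step succeeds independently with probability p (a simplification), a task of n steps succeeds with probability p^n. The 100-step task below is a hypothetical example, not a figure from the episode.

```python
def task_success_rate(per_step: float, steps: int) -> float:
    """Probability that all `steps` independent steps succeed."""
    return per_step ** steps


# Hypothetical 100-step task, e.g. a long sequence of grasps and moves.
for p in (0.99, 0.999):
    print(f"per-step success {p}: whole task succeeds {task_success_rate(p, 100):.1%}")
```

Under these assumptions, the extra nine lifts whole-task success from about 37% to about 90%.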

THE FUTURE OF AGENTS AND TRUST

The critical factor for the future success of AI agents will be trust. As agents perform increasingly complex tasks over longer durations, users need to be confident in their outputs. This requires not only task completion but also auditable and explainable processes, allowing humans to understand how an agent arrived at its solution. This focus on trust and transparency is seen as paramount for agents to deliver true value beyond synthetic benchmarks.
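One concrete way to make an agent's process auditable is to record every step it takes (its stated reasoning, the action, and the observed result) so a human can replay how it reached its answer. A minimal sketch; the `AuditTrail` and `StepRecord` classes and their fields are hypothetical, not part of any Anthropic tooling.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class StepRecord:
    reasoning: str  # why the agent chose this action
    action: str     # the tool call it made
    result: str     # what it observed afterwards


@dataclass
class AuditTrail:
    steps: list = field(default_factory=list)

    def record(self, reasoning: str, action: str, result: str) -> None:
        self.steps.append(StepRecord(reasoning, action, result))

    def summary(self) -> str:
        """One line per step, for a quick human review."""
        return "\n".join(f"{i}. {s.action} -> {s.result}"
                         for i, s in enumerate(self.steps, start=1))

    def to_json(self) -> str:
        """Full trace, including reasoning, for storage and later audit."""
        return json.dumps([asdict(s) for s in self.steps], indent=2)


trail = AuditTrail()
trail.record("Find which test fails", "bash('pytest -x')", "1 failed: test_parse")
trail.record("The bug is in parse(); patch it", "edit('/repo/src/parse.py')", "file updated")
print(trail.summary())
```

Keeping the short summary separate from the full JSON trace lets a reviewer skim first and dig into the reasoning only where something looks wrong.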

Common Questions

SWE-Bench is a benchmark that tests AI coding agents on real-world engineering tasks in existing code repositories. It matters because it measures an agent's ability to navigate and modify complex codebases, which is more representative of actual software development than isolated coding puzzles.

Mentioned in this video

Erik Schluntz mentions using Copilot for coding, which was a catalyst for his increased excitement about AI.

A plotting library; some SWE-Bench tasks involve generating plots with it, and agents sometimes fail to visually inspect the output images.

Mentioned for its coding agent that creates a plan first and its comments on multi-agent systems.

Mentioned as one of the agent frameworks available, alongside LangGraph.

A containerization technology that can be used for sandboxing agent environments, though it can be slow or resource-intensive.

Anthropic's smallest and fastest model, which still performed surprisingly well on SWE-Bench.

A model released by Anthropic that has shown significant improvements in coding tasks and agentic capabilities.

Mentioned as an example of an agent framework that allows users to edit the plan as it goes along.

A paradigm (Think-Act-Observe) that underlies agent frameworks such as SWE-agent and influenced Anthropic's agent implementation.

Mentioned as one of the agent frameworks available, alongside CrewAI.

A startup working on agent sandboxing, mentioned as a friend of Anthropic.

Created SWE-Bench Verified by having humans manually review and filter tasks to ensure they are fully doable.

Used as a comparison point for the potential revenue and profitability of autonomous vehicle services like Waymo.

Mentioned as a previous employer of Erik Schluntz before joining Anthropic.

Erik Schluntz was the CTO and co-founder of this company, which built security and inspection robots for buildings and warehouses.

The company where Erik Schluntz currently works, focusing on AI safety and developing advanced models like Claude.

A company operating autonomous vehicles, discussed in the context of the challenges and economics of self-driving technology.

The company where Eric Jang currently works, mentioned in the context of AI and robotics crossover.

An older remote-desktop technology, used as an analogy from the era before remote access and cloud computing became widespread.

Mentioned in relation to Stan Po's perspective on AI in robotics.

An autonomous vehicle company whose operational figures and vehicle costs are discussed in the context of the self-driving industry's economic viability.

Mentioned alongside Josh Alperin regarding issues with purchasing and testing large batches of GPUs.

Mentioned in the context of discussing hardware issues, specifically with GPUs not performing as expected.

Associated with Stanford and a startup in physical intelligence, focused on diffusion-inspired path planning.

Formerly at Google AI and now at 1X, he jokes with Erik Schluntz about switching between AI and robotics fields and mentions hardware/supply chain complaints.

Head of Claude character at Anthropic, who uses computer use for generating research ideas during her lunch breaks.

From Dusty Robotics, who has a view that AI vision might not be the primary workhorse in robotics.

More from Latent Space

View all 223 summaries 42 min

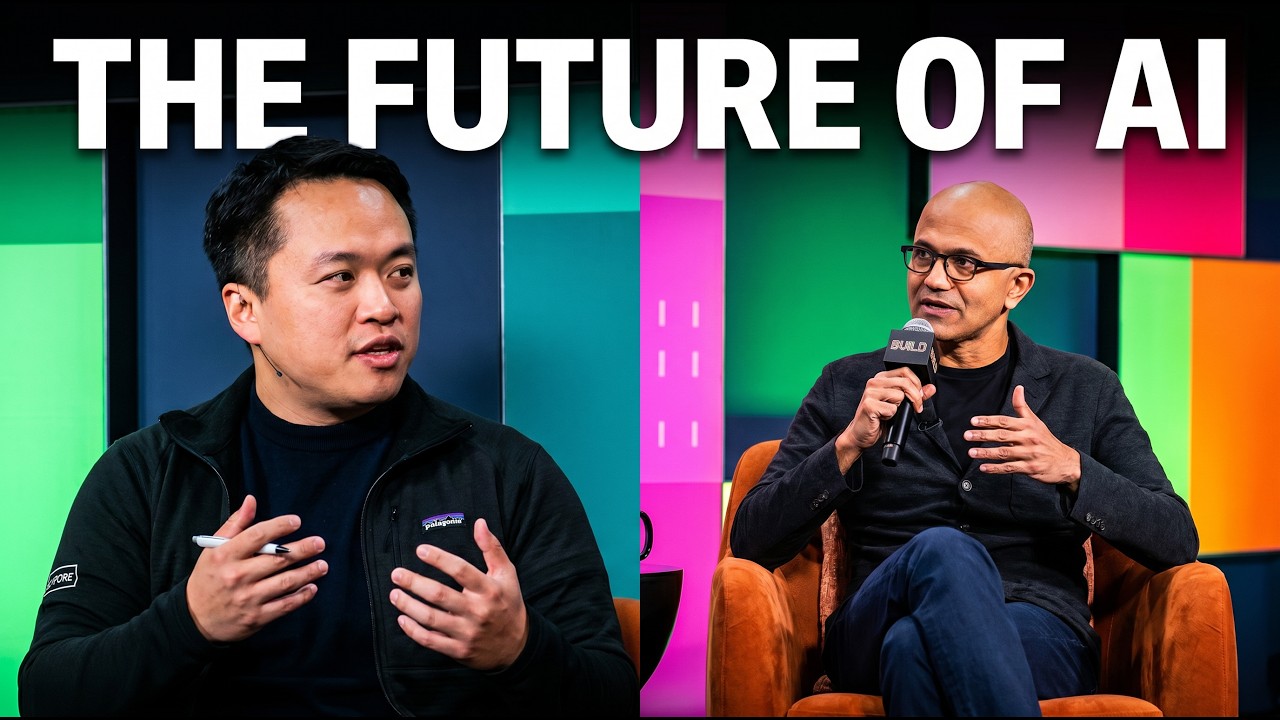

42 minSatya Nadella on AI: @NoPriorsPodcast x Latent Space Crossover Special at Microsoft Build 2026

94 min

94 minScaling Past Informal AI - Carina Hong, Axiom Math

85 min

85 minGitHub’s Agent Era: 14x Commits, 200M Developers, Copilot’s Next Act — Kyle Daigle

105 min

105 minInside xAI: Building Grok Imagine in 3 Months, Videogen vs World Models, and Video Agents— Ethan He

Ask anything from this episode.

Save it, chat with it, and connect it to Claude or ChatGPT. Get cited answers from the actual content — and build your own knowledge base of every podcast and video you care about.

Get Started Free