Key Moments

The Most Important Algorithm Of All Time

Want to know something specific about what's covered?

We've already dissected every moment. Ask and we will deliver (with timestamps).

Key Moments

The Fast Fourier Transform's (FFT) development could have curbed the nuclear arms race by enabling detection of underground tests.

Key Insights

The Fast Fourier Transform (FFT) is crucial for signal processing in modern technology, from Wi-Fi to video streaming.

The FFT's origin is tied to efforts to detect covert nuclear weapons tests, potentially averting the nuclear arms race.

Early attempts at a comprehensive nuclear test ban in the 1950s failed due to the inability to reliably detect underground tests.

The FFT drastically reduces the computational complexity of Discrete Fourier Transforms, making real-time signal analysis feasible.

Carl Friedrich Gauss independently discovered elements of the FFT algorithm in 1805 but it remained unpublished and obscure.

The FFT's widespread application revolutionized fields from image compression to scientific research, impacting modern life significantly.

THE EVERYDAY IMPACT OF THE FFT

The Fast Fourier Transform (FFT) is an algorithm so ubiquitous that it underpins much of modern digital technology, often operating unseen. Its applications span from the signals used in Wi-Fi and 5G communication to the processing required to watch and listen to videos. Essentially, anytime a signal needs to be analyzed or manipulated, there's a high probability that the FFT is involved, demonstrating its fundamental importance in our technologically driven world.

A UNEXPECTED ORIGIN: THE NUCLEAR ARMS RACE

Beyond its technological applications, the FFT has a fascinating and critical origin tied to international relations and the Cold War. Scientists developed the FFT specifically to address the challenge of detecting covert nuclear weapons tests. Had this algorithm been discovered and widely adopted sooner, it might have provided the necessary verification for a comprehensive test ban treaty, potentially halting or significantly slowing the escalating nuclear arms race between global superpowers.

THE CHALLENGE OF VERIFYING TEST BANS

In the post-World War II era, nations attempted diplomatic solutions to control nuclear proliferation, notably through the Baruch Plan, which the Soviet Union rejected. This led to an arms race marked by extensive nuclear testing, often in remote locations, causing significant environmental and human impact. The desire for a comprehensive Test Ban Treaty in the late 1950s was strong, but a major hurdle emerged: distinguishing underground nuclear detonations from natural earthquakes proved exceedingly difficult, especially with the Soviets' refusal of on-site inspections.

DECONSTRUCTING SIGNALS: THE FOURIER TRANSFORM

To overcome the detection challenge, scientists employed the Fourier Transform, a mathematical tool that decomposes complex signals into a sum of simple sine waves of various frequencies and amplitudes. This allows for the analysis of signals, like seismic vibrations from underground explosions, by identifying the unique frequency components. While the concept is powerful, the traditional Fourier Transform requires an immense amount of computation, especially for the discrete, finite digital data captured by instruments like seismometers.

THE BREAKTHROUGH: THE FAST FOURIER TRANSFORM

The computational burden of the Discrete Fourier Transform (DFT) was prohibitive for real-time analysis. In 1963, mathematicians Richard Garwin and John Tukey developed the Fast Fourier Transform (FFT), a revolutionary algorithm that dramatically reduced the number of calculations required. Instead of requiring 'n squared' operations for 'n' data points, the FFT uses 'n log n' operations. This breakthrough, achieved through clever exploitation of symmetries in calculations, transformed a multi-year computation into one that could be done in minutes, making advanced signal analysis practical.

GAUSS'S EARLY DISCOVERY AND THE FFT'S IMPACT

Remarkably, elements of the FFT algorithm were independently discovered by Carl Friedrich Gauss in 1805 but remained unpublished and obscure. Had Gauss published his findings, the FFT might have emerged a century earlier. Following Cooley and Tukey's 1965 publication, the FFT's adoption was rapid and transformative. Its efficiency enabled innovations in data compression (essential for images and video you watch today), radar, sonar, medical imaging, and countless other scientific and engineering fields, cementing its status as one of history's most important algorithms.

THE FFT'S LEGACY AND COUNTING CAREER HOURS

The profound, albeit often invisible, impact of the FFT highlights how scientific and technological advancements can reshape global affairs and daily life. This idea of cumulative impact over time is echoed in the concept of '80,000 hours' – the approximate number of hours spent in a career. The sponsor, 80,000 Hours, uses this to encourage individuals to consider how they can best utilize their working lives to make a positive difference, offering resources to help find fulfilling careers that address pressing global issues and leverage impactful work.

Mentioned in This Episode

●Supplements

●Software & Apps

●Organizations

●People Referenced

Common Questions

The Fast Fourier Transform (FFT) is an efficient algorithm for decomposing a signal into its constituent sine waves. It has revolutionized signal processing and is used in everything from radar to watching this video.

Topics

Mentioned in this video

Mathematician who called the FFT the most important numerical algorithm of our lifetime.

Mathematician who, along with Richard Garwin, debated seismometer superiority and was later seen working on Discrete Fourier Transforms, leading to the FFT.

Mathematician who published on Fourier analysis in 1807, predating the widespread understanding of Gauss's earlier, similar work.

An IBM researcher who, with Richard Garwin's request (though Garwin initially gave a false reason), programmed the FFT algorithm.

An algorithm used for signal processing, crucial for detecting nuclear tests, compressing data, and many other applications. Its efficiency revolutionizes computation.

A method to decompose discrete, finite data into its constituent frequencies. It requires N-squared computations, making it too slow for large datasets.

More from Veritasium

View all 97 summaries 30 min

30 minHow Does Google Maps Actually Work?

22 min

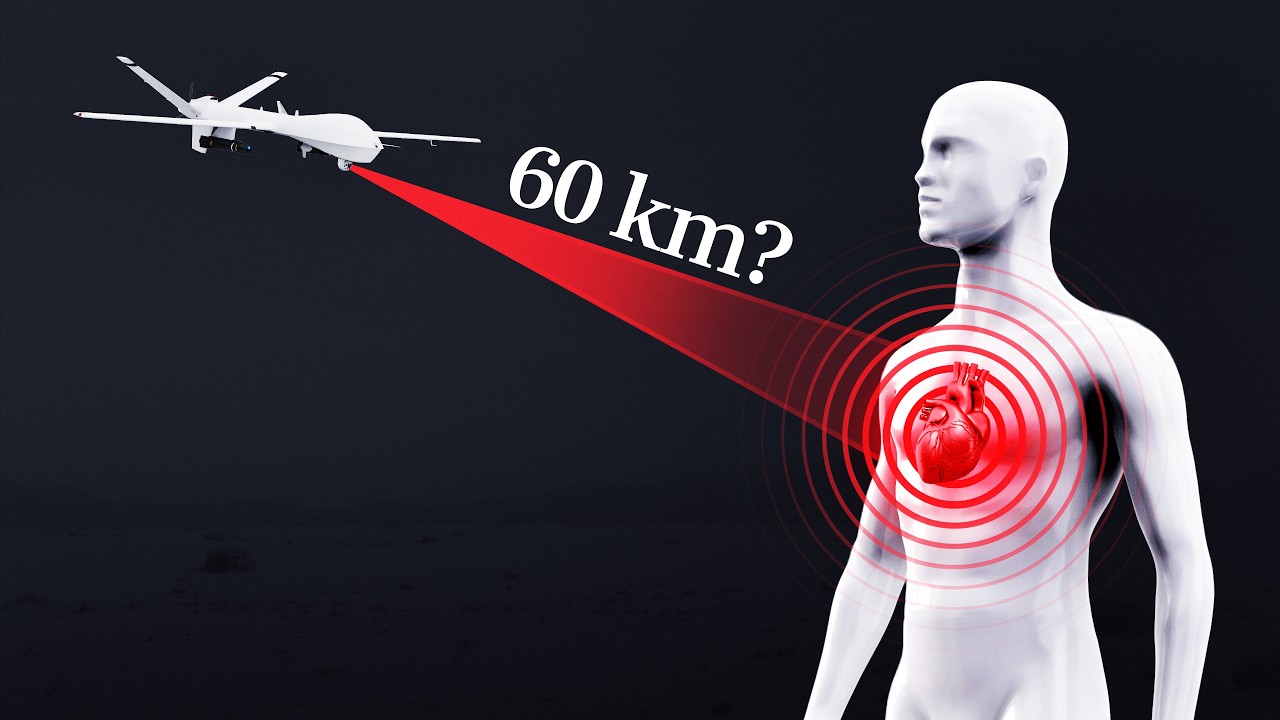

22 minCan a quantum sensor detect your heartbeat from 60 km away?

34 min

34 minThe disaster I never imagined having to worry about

27 min

27 minCan you steal $10,000 from a locked iPhone?

Ask anything from this episode.

Save it, chat with it, and connect it to Claude or ChatGPT. Get cited answers from the actual content — and build your own knowledge base of every podcast and video you care about.

Get Started Free