The Information Apocalypse: A Conversation with Nina Schick (Episode: #220)

Key Moments

Deepfakes and AI-generated media are leading to an information apocalypse, eroding trust and reality.

Key Insights

AI-generated synthetic media, including deepfakes, is rapidly advancing, making it difficult to distinguish real from fake content.

This technology democratizes the creation of highly realistic fake media, posing a significant threat to truth and trust in institutions.

Foreign actors, particularly Russia, have a history of sophisticated information warfare, exploiting societal divisions, especially racial ones.

The architecture of social media amplifies misinformation by promoting engagement over accuracy, creating echo chambers and polarization.

Combating the information apocalypse requires a multi-faceted approach including technological solutions, societal resilience, and digital education.

The erosion of trust in media and institutions, coupled with the rise of synthetic media, creates a dangerous environment where reality itself is contested.

THE RISE OF SYNTHETIC MEDIA AND DEEP FAKES

The conversation centers on the alarming rise of synthetic media, meaning any media (images, video, or text) generated by artificial intelligence. The technology is advancing rapidly and has moved beyond Hollywood's capabilities, to the point where convincing fake human faces can be generated effortlessly, as demonstrated by thispersondoesnotexist.com. The implications are profound: AI can now synthesize voices and digital likenesses, democratizing the creation of highly realistic fake content. This capability has commercial applications, but it is also the most potent form of disinformation, capable of transforming how we perceive reality.

THE DEMOCRATIZATION OF DISINFORMATION

The alarming aspect of synthetic media is its increasing accessibility. While advanced deepfakes currently require some technical knowledge, the trend points towards easily usable apps and software that will enable almost anyone to create sophisticated fake content. This poses a significant threat because the barriers to entry are diminishing rapidly. The ease with which content can be generated means that malicious actors, or even individuals with less harmful intentions, can flood the information ecosystem with believable falsehoods, making it increasingly difficult for the public to discern truth from fiction.

HISTORICAL CONTEXT OF RUSSIAN INFORMATION WARFARE

Nina Schick highlights Russia's long history of "active measures" and information warfare, dating back to the Cold War. The tactics have evolved with technology, particularly through social media influence operations. Examples given include the denial of the annexation of Crimea, the manipulation of narratives around the Syrian refugee crisis to divide Europe, and sophisticated interference in the 2016 US election. These operations involve creating fake online personas and communities to sow discord and distrust, often disproportionately targeting specific demographics like the African-American community.

EXPLOITING SOCIETAL DIVISIONS AND ERODING TRUST

A key strategy employed by adversaries is the exploitation of existing societal divisions, particularly racial tensions. The podcast references historical Soviet disinformation campaigns, such as the lie that AIDS was a bio-weapon created to harm Black people, which still resonates today. In contemporary elections, influence operations have focused on exacerbating identity politics and creating alienation among various groups. The goal is not just to misinform but to foster a deep-seated cynicism and break people's commitment to seeking truth, leading to an "epistemological breakdown" and a tuning out from civic engagement.

THE FAILURE OF INSTITUTIONS AND THE ROLE OF SOCIAL MEDIA

Schick emphasizes that the current information ecosystem has become deeply corrupted, a process only accelerated by social media's architecture. The business model, driven by engagement and ad revenue, often selects for sensationalism and conflict over accuracy. This has led to a collapse of trust in traditional institutions, including journalism and government. The widespread inability to agree on basic facts or even the nature of problems, such as foreign interference, is a symptom of this pervasive information disorder, making it difficult to identify and address existential risks.

POTENTIAL SOLUTIONS AND THE URGENCY OF ACTION

Addressing the impending 'information apocalypse' requires a multi-pronged approach. Technical solutions include developing AI for detecting deepfakes and embedding authenticity watermarks into media at the hardware level. However, the adversarial nature of AI means detectors constantly lag behind generative models. Crucially, societal resilience must be built through widespread digital literacy education. The conversation underscores that while technology facilitates disinformation, the root problem is human intent and societal vulnerabilities. There is an urgent need to establish ethical frameworks and educate the public, as the window for meaningful action is closing rapidly.

Common Questions

Deepfakes are synthetic media (images, audio, video) generated by AI. They are a significant problem because they can be used to spread misinformation and disinformation, making it increasingly difficult to discern reality and trust information, and potentially leading to societal breakdown.

Mentioned in this video

Actor in 'The Irishman' whose de-aging in the film was discussed in contrast to newer, faster AI technologies.

Actor in 'The Irishman' whose de-aging in the film was discussed in contrast to newer, faster AI technologies.

Author and broadcaster specializing in technology and AI's impact on society; advised global leaders and wrote the book 'Deep Fakes'.

University where Nina Schick holds a degree.

Former US Vice President and President, advised by Nina Schick on how the 2020 election could be impacted by emerging threats.

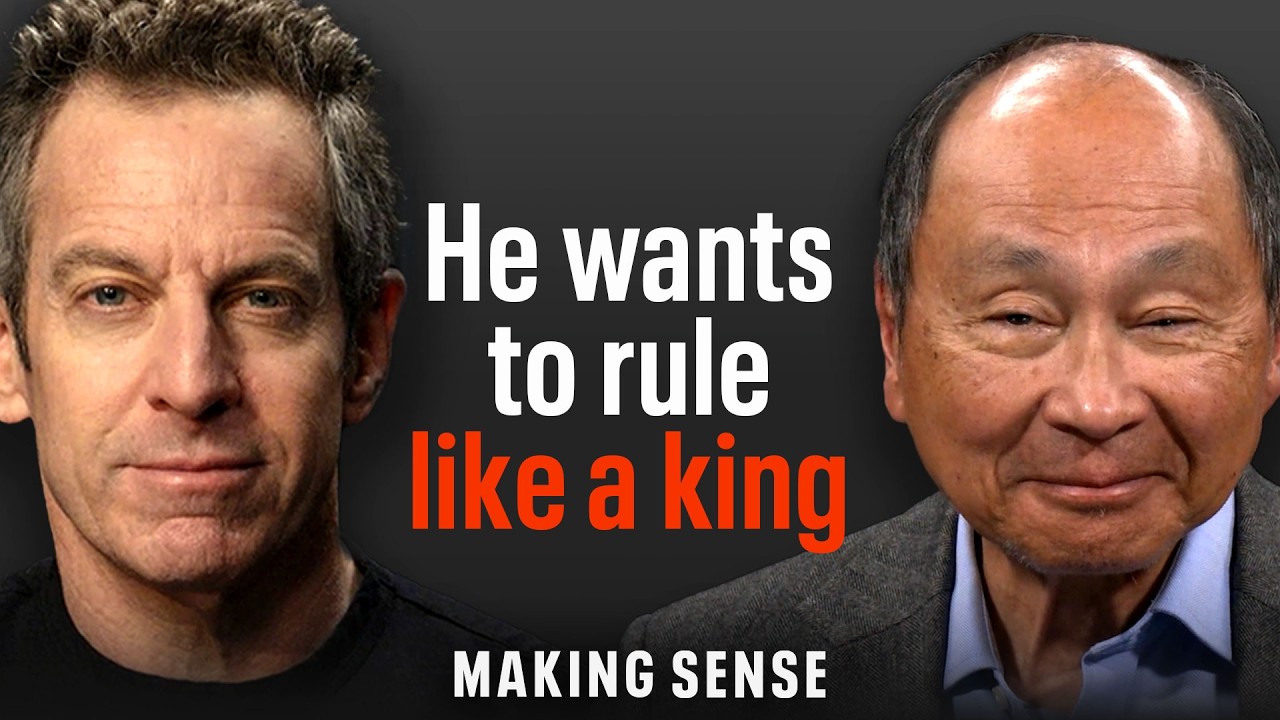

Host of the Making Sense podcast, conversational partner with Nina Schick.

Governor of Michigan, who was the target of a kidnapping plot by a militia group in an incident related to political extremism.

Social movement discussed in relation to Russian fabricated Facebook groups and fake news networks designed to sow division.

Russian state-sponsored television network reported to be the most watched news channel on YouTube.

Media company where Nina Schick is a regular contributor.

Russian agency responsible for social media influence operations, including those targeting the 2016 and 2020 US elections.

Media company where Nina Schick is a regular contributor.

North Atlantic Treaty Organization; Nina Schick worked with the former Secretary General of NATO.

University where Nina Schick holds a degree.

Political and economic union impacted by the migrant crisis, which Russia allegedly weaponized through information operations.

Media company where Nina Schick is a regular contributor.

Media company where Nina Schick is a regular contributor.

Company working on the Content Authenticity Initiative to embed watermarks and prove media provenance.

Video platform where many early synthetic media examples have emerged, and where RT is a highly watched news channel.

Country noted for becoming more like Russia in its pursuit of disinformation campaigns in Western information spaces.

Country where Russia waged an information war during its annexation of Crimea and intervention in eastern Ukraine.

Country discussed for its history of 'active measures' and information warfare, including election interference and exploitation of societal divisions.

Region annexed by Russia, a key event discussed in the context of Russian information warfare.

Country where Russia stepped up bombardment of civilians, contributing to a mass migration crisis in Europe.
