
Belief & Identity: A Conversation with Jonas Kaplan (Episode #261)

Key Moments

The neuroscience of belief helps explain why changing minds is hard: identity, emotion, and cognitive biases all work against revision.

Key Insights

Belief flexibility is crucial for societal cooperation and scientific progress.

The continued influence effect shows that misinformation, once believed, can persist even after correction.

The illusory truth effect is the finding that repetition increases belief in a statement, even if it is false.

The backfire effect, where corrections strengthen beliefs, is likely context-dependent and less common than initially thought.

Emotions play a significant role in belief formation and resistance to change, often tied to identity.

Recognizing our own psychological biases and emotional responses is key to improving open-mindedness.

THE CRITICAL ROLE OF BELIEF FLEXIBILITY

Belief flexibility is fundamental to human cooperation and societal functioning. In a democracy, the ability to change minds through conversation and evidence is essential. When persuasion fails, the alternative is coercion, often leading to conflict. For scientists, belief revision based on new evidence is the bedrock of the scientific method. Understanding why this process is often impeded is crucial for navigating personal and collective challenges, especially in an era rife with misinformation.

THE PERSISTENCE OF MISINFORMATION: CONTINUED INFLUENCE AND ILLUSORY TRUTH

Even when corrected, misinformation can continue to influence our thinking. This is known as the continued influence effect. For example, in studies about a warehouse fire, participants continued to attribute the fire to preventable causes like flammable materials, even after being told those materials weren't present. Similarly, the illusory truth effect demonstrates that repeated exposure to a statement, even a false one, increases the likelihood of believing it. This is partly due to a cognitive 'truth bias,' where accepting a proposition is faster and more automatic than rejecting it.

THE BACKFIRE EFFECT: A DEBATED PHENOMENON

The backfire effect is the claim that attempts to correct false beliefs can sometimes strengthen them, particularly among people already committed to those beliefs. A 2010 study on the justification for the Iraq War found that conservatives presented with evidence that Iraq had no weapons of mass destruction became even more convinced that the weapons existed. However, subsequent large-scale studies have struggled to replicate this effect consistently, indicating that it may be context-dependent or less prevalent than popularly believed. Because a robust backfire effect has been hard to establish, the more productive question may be why corrections so often fail to change beliefs at all.

IDENTITY AND EMOTION AS BARRIERS TO BELIEF CHANGE

Resistance to changing deeply held beliefs is often rooted in their connection to our sense of self and social identity. When a belief is challenged, it can feel like a threat to our personal or group identity, triggering emotional responses like aversion or contempt. Neuroimaging studies show heightened activity in the amygdala and insula, regions associated with emotional salience, when individuals resist belief change. This emotional charge can override rational consideration, making it difficult to accept new evidence that conflicts with core aspects of who we believe we are.

THE ROLE OF EMOTION IN REASONING

Emotions are intricately linked with cognition, not separate from it, as classical views might suggest. While relying solely on feelings can lead us astray, emotions also provide crucial information, such as the feeling of certainty or uncertainty, which guides our thought processes. However, these emotional states can become 'uncoupled' from valid reasoning. For instance, individuals with specific brain injuries may lack the emotional 'signposts' necessary to make knowledge behaviorally relevant, illustrating the indispensable yet potentially misleading role of feelings in decision-making and belief consistency.

WISHFUL THINKING AND THE SCIENTIFIC APPROACH

Wishful thinking, or wanting reality to conform to our desires rather than accepting it as it is, is a significant impediment to clear thinking. The scientific method incorporates mechanisms specifically designed to counteract this bias, such as rigorous testing and peer review. Recognizing when we are motivated to believe something is true because we *want* it to be true is a critical first step towards intellectual honesty. This is particularly challenging in social contexts, where changing a belief might jeopardize relationships, social standing, or group affiliations, thus raising the stakes of belief revision.

THE 'FIREPLACE DELUSION' AS AN EXAMPLE OF SENTIMENTAL BELIEF

The concept of the 'fireplace delusion' illustrates how sentimental attachments can override factual evidence. Many people have a nostalgic and positive association with burning wood in fireplaces, perceiving it as cozy and traditional. Despite scientific evidence demonstrating the significant health risks and air pollution associated with wood smoke—comparable to engine fumes—people often resist acknowledging these downsides. This attachment may stem from deep-seated evolutionary or cultural narratives, highlighting how ingrained emotions and associations can make us resistant to readily available, albeit unpleasant, truths.

SELF-REFLECTION AND COGNITIVE FLEXIBILITY

Ultimately, improving our capacity for belief change hinges on self-awareness. It is more fruitful to focus on making ourselves more open-minded than on persuading others. This involves recognizing when our emotional reactions—such as anger, fear, or disgust—are being triggered by certain ideas, and understanding that these feelings can be mistaken for evidence of truth. By noticing when this psychological machinery is invoked, we can begin to disengage emotional impulses from epistemic judgment, fostering greater cognitive flexibility and a more accurate understanding of the world.


Common Questions

Key effects include the continued influence effect, where misinformation persists even after correction, and the illusory truth effect, where repetition makes false information seem more true. These biases make it difficult to update our beliefs with new evidence.


Mentioned in this video

University where Jonas Kaplan works as an associate professor at the Brain and Creativity Institute.

One of the outlets that covered the 2016 paper, though the speaker notes limited press coverage.

Used the presence of weapons of mass destruction in Saddam's stockpile as a justification for the Iraq War, as discussed in the backfire effect study.

Cognitive neuroscientist at USC and associate professor at the Brain and Creativity Institute. Co-author on research about belief change.

Podcast guest whose work on isolated consciousness in the brain is referenced.

Co-author of a 2010 study on the backfire effect related to the Iraq War.

Co-author of the 2016 paper on the neural correlates of maintaining political beliefs.

Researcher who conducted a large study in 2018 questioning the backfire effect.

Anthropologist whose work on the 'Bush people' of Africa and their nighttime storytelling around fires is cited.

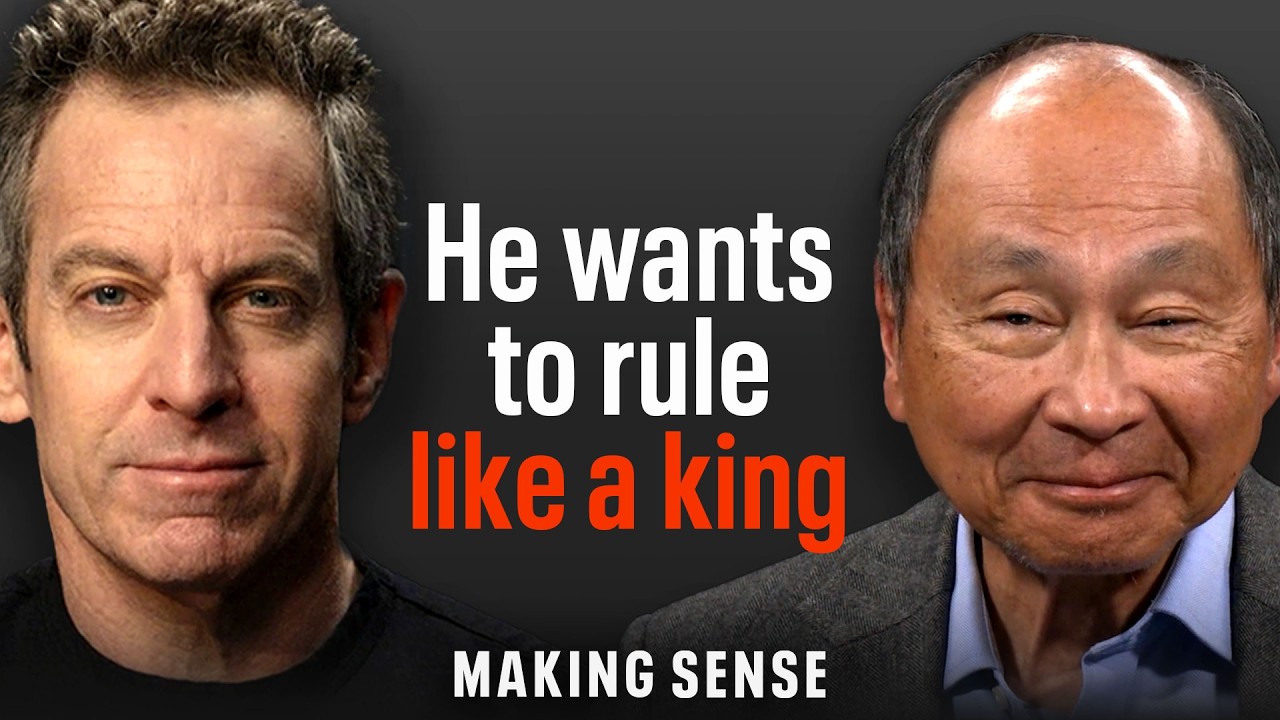

Host of the Making Sense podcast, discussing belief and identity with Jonas Kaplan.

Founder of the Brain and Creativity Institute at USC. His work on the split between reason and emotion is mentioned.

Co-author of a 2010 study on the backfire effect related to the Iraq War.

Researcher who conducted a large study in 2018 questioning the backfire effect.
