Key Moments

The Strange Math That Predicts (Almost) Anything

Russian math feud birthed Markov chains, revolutionizing prediction in bomb design, search engines, and AI.

Key Insights

A mathematical feud between Pavel Nekrasov and Andrey Markov in early 20th century Russia led to the development of Markov chains.

Markov chains allow for probability calculations in systems where events are dependent, unlike traditional probability theory focused on independent events.

The Monte Carlo method, which modeled neutron behavior as a Markov chain and estimated outcomes by random sampling, was developed at Los Alamos shortly after World War II for nuclear weapon design.

Google's PageRank algorithm, which revolutionized search engines, is fundamentally a Markov chain applied to the web graph.

Modern AI and large language models heavily rely on Markov chain principles, though advanced concepts like 'attention' enhance their predictive capabilities.

Understanding Markov chains helps explain phenomena such as how many shuffles randomize a deck of cards, and clarifies why systems with strong feedback loops, such as climate change, are harder to predict with simple chains.

A FEUD THAT SHAPED PROBABILITY

Over a century ago, a bitter feud between Russian mathematicians Pavel Nekrasov and Andrey Markov, fueled by political divisions, inadvertently laid the groundwork for modern predictive mathematics. Nekrasov, who tied probability to free will and divine will, clashed with the atheist Markov, who insisted on rigorous mathematical explanations. Their dispute centered on the assumption of independence in probability, particularly in the Law of Large Numbers. First proven by Jacob Bernoulli, the law states that the average of results from independent trials approaches the expected value as more trials are performed.
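The Law of Large Numbers described above can be illustrated with a short simulation. This is a minimal sketch, assuming a fair coin as the independent trial; the function name `fraction_of_heads` and the fixed seed are illustrative choices, not something taken from the episode.

```python
import random

def fraction_of_heads(num_flips, seed=0):
    """Return the fraction of heads in num_flips independent fair coin flips."""
    rng = random.Random(seed)  # fixed seed so repeated runs give the same numbers
    heads = sum(rng.random() < 0.5 for _ in range(num_flips))
    return heads / num_flips

if __name__ == "__main__":
    # The expected value is 0.5; the observed fraction should drift toward it
    # as the number of flips grows.
    for n in (10, 1_000, 100_000):
        print(n, fraction_of_heads(n))
```

With only a handful of flips the fraction can sit far from 0.5, while with many flips it settles close to it, which is the behavior Bernoulli's theorem describes for independent trials.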

MARKOV'S CHAIN: DEPENDENCE AND PREDICTION

Markov challenged Nekrasov's assertion that observing statistical convergence implied underlying independence. To prove that dependent events could also follow the Law of Large Numbers, Markov analyzed the sequence of vowels and consonants in Alexander Pushkin's poem 'Eugene Onegin.' By classifying each letter as one of two 'states' (vowel or consonant) and counting the transitions between them, he demonstrated that even with dependencies (where the type of the next letter is influenced by the previous one), the overall statistics converge. This line of work introduced the concept of Markov chains, where the future state depends only on the current state, not the entire history.
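Markov's argument can be sketched as a two-state chain. The transition probabilities below are hypothetical values chosen for illustration (they are not given in the text), and the names `P`, `simulate_vowel_fraction`, and `stationary_vowel_fraction` are likewise assumptions. The point is only that the long-run vowel fraction converges even though each letter depends on the one before it.

```python
import random

# Hypothetical transition probabilities, P[current][next].
# "V" = vowel, "C" = consonant. Each row sums to 1.
P = {
    "V": {"V": 0.13, "C": 0.87},
    "C": {"V": 0.66, "C": 0.34},
}

def simulate_vowel_fraction(num_letters, seed=0):
    """Walk the chain and return the observed fraction of vowels."""
    rng = random.Random(seed)
    state = "C"  # arbitrary starting state
    vowels = 0
    for _ in range(num_letters):
        # The next state depends only on the current state (the Markov property).
        state = "V" if rng.random() < P[state]["V"] else "C"
        vowels += state == "V"
    return vowels / num_letters

def stationary_vowel_fraction():
    """Solve pi_V = pi_V * P[V][V] + (1 - pi_V) * P[C][V] for pi_V."""
    return P["C"]["V"] / (1 - P["V"]["V"] + P["C"]["V"])

if __name__ == "__main__":
    print("predicted:", stationary_vowel_fraction())
    for n in (100, 10_000, 200_000):
        print(n, simulate_vowel_fraction(n))
```

The simulated fraction approaches the value predicted from the transition probabilities, which is the sense in which dependent events can still obey the Law of Large Numbers.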

FROM NUCLEAR BOMBS TO MONTE CARLO

The practical implications of Markov chains became starkly evident in nuclear weapons research at Los Alamos in the 1940s. Physicist Stanislaw Ulam, while recovering from illness, pondered the probability of winning a game of solitaire and realized that playing many random games and counting the wins would give a good estimate. This insight, refined by John von Neumann, showed that complex systems like neutron behavior within a nuclear core could be simulated using random sampling. By modeling neutron interactions as a Markov chain and running simulations on early computers like ENIAC, they developed the Monte Carlo method. This statistical approach allowed them to approximate solutions to complex differential equations, crucial for determining the critical mass of fissile material.
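The sampling idea can be shown with a deliberately simplified toy model. This is a sketch under stated assumptions, not the actual Los Alamos calculation: each neutron either causes a fission with a hypothetical probability `p_fission`, releasing 2 or 3 new neutrons with equal chance, or is lost. The function names and all numbers are illustrative. The quantity estimated is the average number of next-generation neutrons per neutron, often written k; a chain reaction grows when k exceeds 1.

```python
import random

def offspring(rng, p_fission):
    """Number of neutrons produced by one neutron in the toy model."""
    if rng.random() < p_fission:
        return rng.choice((2, 3))  # toy assumption: fission releases 2 or 3 neutrons
    return 0  # neutron escapes or is absorbed without fission

def estimate_k(p_fission, samples=100_000, seed=0):
    """Monte Carlo estimate of the mean offspring count per neutron."""
    rng = random.Random(seed)
    total = sum(offspring(rng, p_fission) for _ in range(samples))
    return total / samples

if __name__ == "__main__":
    # In this toy model the exact answer is p_fission * 2.5, so the estimate
    # can be compared against it directly.
    for p in (0.3, 0.4, 0.5):
        print(p, estimate_k(p), "exact:", p * 2.5)
```

In this toy case the exact answer is easy to compute by hand. Random sampling earns its keep when geometry, energies, and material properties make the exact calculation impractical.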

REVOLUTIONIZING THE INTERNET'S INFORMATION FLOW

The principles of Markov chains proved instrumental in organizing the burgeoning internet. Google's founders, Larry Page and Sergey Brin, faced the challenge of ranking web pages effectively. They conceptualized the web as a massive Markov chain, where each page is a state and each link is a possible transition to another page. A page's score then corresponds to the long-run share of time that a surfer clicking links at random would spend on it. By analyzing the link structure in this way, they developed the PageRank algorithm, which determines a page's importance based on the quantity and quality of incoming links. This approach allowed Google to provide more relevant search results than competitors that relied solely on keyword frequency, fundamentally changing how people access information online.
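The link-following chain can be sketched with a few lines of power iteration. This is a minimal illustration, not Google's production algorithm: the four-page graph `web` is made up, and the `damping` value of 0.85 (the chance of following a link rather than jumping to a random page) is an assumption that the text above does not mention.

```python
def pagerank(links, damping=0.85, iterations=100):
    """Approximate PageRank by repeatedly applying the random-surfer step.

    links maps each page to the list of pages it links to. Every linked
    page must also appear as a key.
    """
    pages = list(links)
    n = len(pages)
    rank = {page: 1.0 / n for page in pages}  # start from a uniform distribution
    for _ in range(iterations):
        # With probability (1 - damping) the surfer jumps to a random page.
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page, outgoing in links.items():
            if outgoing:
                share = damping * rank[page] / len(outgoing)
                for target in outgoing:
                    new_rank[target] += share
            else:
                # A page with no links passes its rank evenly to all pages.
                share = damping * rank[page] / n
                for target in pages:
                    new_rank[target] += share
        rank = new_rank
    return rank

# Hypothetical four-page web: C is linked from A, B, and D.
web = {"A": ["B", "C"], "B": ["C"], "C": ["A"], "D": ["C"]}

if __name__ == "__main__":
    for page, score in sorted(pagerank(web).items(), key=lambda kv: -kv[1]):
        print(page, round(score, 4))
```

In this small graph, page C collects links from three pages and ends up ranked highest, while D, which nothing links to, ends up lowest.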

PREDICTING TEXT AND THE RISE OF AI

Claude Shannon, the father of information theory, applied Markov chain concepts to predict text. By analyzing sequences of letters and later words, he showed that conditioning on more preceding elements significantly improved prediction accuracy. This principle underpins modern applications like Gmail's predictive text feature. Today's advanced AI systems, including large language models, build upon these foundations, using 'attention' mechanisms to weigh the importance of different parts of the input sequence. This allows for more nuanced and context-aware predictions than simple Markov chains alone.
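The idea of predicting the next word from the preceding ones can be sketched as a word-level Markov model. This is an illustrative toy, not Shannon's procedure or Gmail's system: the function names, the `order` parameter (how many preceding words form the state), and the tiny corpus are all assumptions.

```python
import random
from collections import defaultdict

def build_model(text, order=1):
    """Map each run of `order` consecutive words to the words seen after it."""
    words = text.split()
    model = defaultdict(list)
    for i in range(len(words) - order):
        context = tuple(words[i:i + order])
        model[context].append(words[i + order])
    return model

def generate(model, start, length, seed=0):
    """Extend `start` (a tuple of `order` words) by sampling observed successors."""
    rng = random.Random(seed)
    context = tuple(start)
    output = list(context)
    for _ in range(length):
        candidates = model.get(context)
        if not candidates:
            break  # this context never appeared in the training text
        output.append(rng.choice(candidates))
        context = tuple(output[-len(context):])  # slide the window forward
    return output

if __name__ == "__main__":
    corpus = "the cat sat on the mat and the cat ran"
    print(" ".join(generate(build_model(corpus, order=1), ("the",), 8)))
    print(" ".join(generate(build_model(corpus, order=2), ("the", "cat"), 8)))
```

Raising `order` makes each prediction depend on more context, which tends to produce more coherent text but requires far more training data to cover the possible contexts.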

THE POWER OF 'MEMORYLESS' SYSTEMS AND COMPLEXITIES

The core strength of Markov chains lies in their 'memoryless' property: the future depends only on the present state. This simplification allows complex, real-world systems, from nuclear reactions to language patterns, to be modeled and understood. However, some systems contain positive feedback loops that challenge the predictive power of simple Markov chains. In global warming, for example, increased CO2 leads to higher temperatures, which in turn increase atmospheric water vapor (a potent greenhouse gas), which raises temperatures further. Despite these limitations, Markov chain theory remains a powerful tool for making meaningful predictions in a vast array of dependent systems.

SHUFFLING CARDS AND THE MATH BEHIND IT

The seemingly simple question of how many times a deck of cards needs to be shuffled to achieve randomness can also be analyzed using Markov chains. Each arrangement of the deck is a state, and each shuffle is a step. While intuitive guesses might vary, a standard riffle shuffle requires approximately seven shuffles to randomize a 52-card deck. More complex or less efficient shuffling methods, like a simple overhand shuffle, can require thousands of repetitions. This illustrates how even everyday processes can be understood through the lens of probabilistic modeling.
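A riffle shuffle can be simulated to watch the deck lose its order step by step. The sketch below assumes the Gilbert-Shannon-Reeds model of a riffle, a standard mathematical idealization that the text above does not name; the function names and the choice of statistic are illustrative. It tracks one crude signal only: how often the original top card is still on top.

```python
import random

def riffle_shuffle(deck, rng):
    """One riffle under the Gilbert-Shannon-Reeds model (an assumed idealization)."""
    n = len(deck)
    # Cut the deck at a Binomial(n, 1/2) position.
    cut = sum(rng.random() < 0.5 for _ in range(n))
    left, right = deck[:cut], deck[cut:]
    shuffled = []
    i = j = 0
    while i < len(left) or j < len(right):
        remaining_left = len(left) - i
        remaining_right = len(right) - j
        # Take the next card from a packet with probability proportional to its size.
        if rng.random() < remaining_left / (remaining_left + remaining_right):
            shuffled.append(left[i])
            i += 1
        else:
            shuffled.append(right[j])
            j += 1
    return shuffled

def top_card_stays(num_shuffles, trials=2_000, seed=0):
    """Fraction of trials in which card 0 is still on top after num_shuffles riffles."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(trials):
        deck = list(range(52))
        for _ in range(num_shuffles):
            deck = riffle_shuffle(deck, rng)
        hits += deck[0] == 0
    return hits / trials

if __name__ == "__main__":
    for k in (1, 3, 5, 7, 10):
        print(k, top_card_stays(k), "uniform would be", round(1 / 52, 4))
```

After a single riffle the top card stays put roughly half the time, and the fraction falls toward the 1/52 expected of a well-mixed deck as shuffles accumulate. A single statistic like this is not a full test of randomness; it only shows the trend.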

Common Questions

The Law of Large Numbers states that as the number of independent trials increases, the average outcome will approach the expected value. It was first proven by Jacob Bernoulli in 1713.

Mentioned in this video

Law of Large Numbers: The principle stating that the average outcome gets closer to the expected value as more independent trials are run. First proven by Jacob Bernoulli.

PageRank: Google's original search algorithm, which ranks web pages based on the number and quality of inbound links, treating them as endorsements.

Monte Carlo method: A computational technique that uses random sampling to obtain numerical results, born from Ulam's and von Neumann's work on simulating complex systems such as neutron behavior in nuclear weapons.

More from Veritasium

View all 96 summaries 22 min

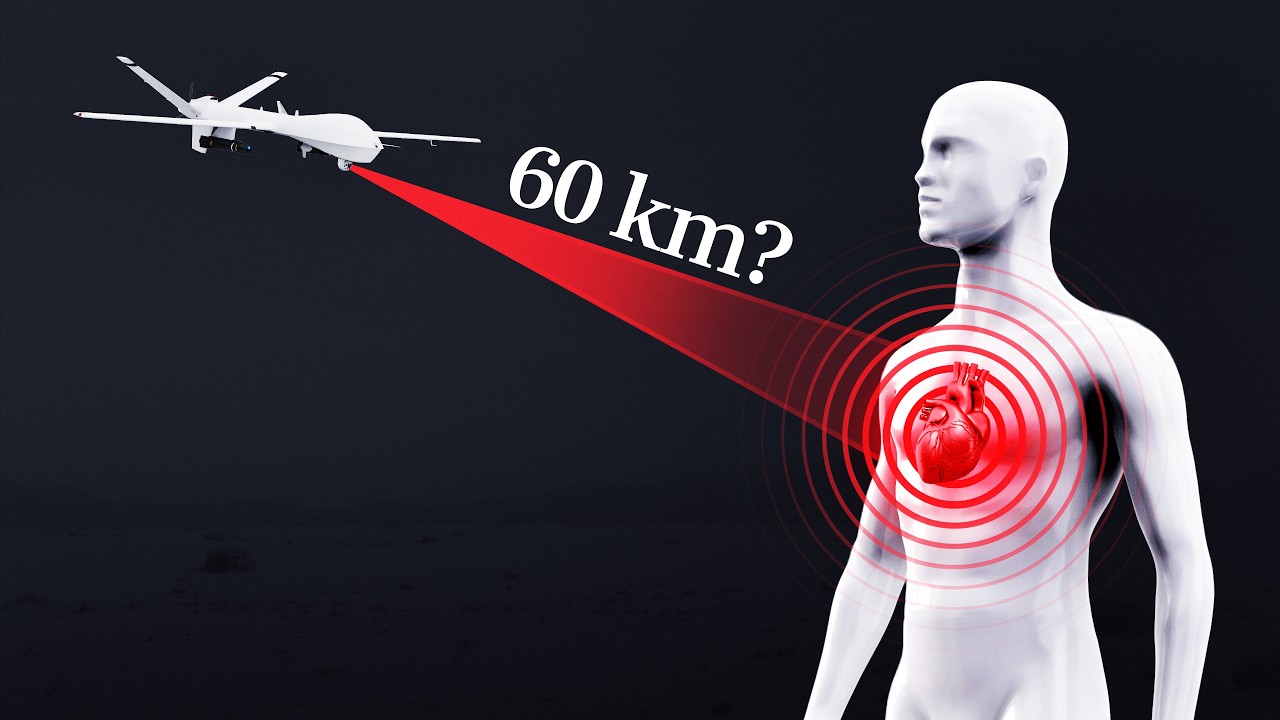

22 minCan a quantum sensor detect your heartbeat from 60 km away?

34 min

34 minThe disaster I never imagined having to worry about

27 min

27 minCan you steal $10,000 from a locked iPhone?

59 min

59 minWhy Is CERN Making Antimatter?

Ask anything from this episode.

Save it, chat with it, and connect it to Claude or ChatGPT. Get cited answers from the actual content — and build your own knowledge base of every podcast and video you care about.

Get Started Free