Key Moments

The State of Silicon and the GPU Poors - with Dylan Patel of SemiAnalysis

Dylan Patel discusses the GPU rich vs. GPU poor divide, AI hardware, and the semiconductor industry.

Key Insights

The semiconductor industry is experiencing a significant boom driven by AI, creating a "GPU rich" and "GPU poor" divide.

Infrastructure efficiency and custom hardware (like Google's TPUs) are becoming increasingly critical for large tech companies.

While NVIDIA dominates the GPU market, alternatives and custom chips are emerging, though facing significant challenges.

The key metric for AI hardware performance shifts from Model FLOPs Utilization (MFU) in training to Model Bandwidth Utilization (MBU) in inference.

The semiconductor supply chain is highly complex and fragmented, making it difficult to replicate or rapidly expand capacity.

Open-source AI models and research are crucial for democratizing access and fostering innovation, despite the compute advantages of large labs.

THE RISE OF SEMICONDUCTORS IN THE AI ERA

Dylan Patel highlights the semiconductor industry's transformation, fueled by the AI revolution, which has led to a stark division between the "GPU rich" and the "GPU poor." He notes that his firm, SemiAnalysis, draws on deep industry knowledge to analyze hardware from design to manufacturing. Patel observes that high-performance computing, historically a niche, is now central, with AI as its most prominent and successful application. This shift underscores the growing importance of efficient infrastructure and custom hardware, a space where Google, with its TPUs, has a significant advantage over competitors such as Microsoft Azure and AWS, which are catching up with their own infrastructure innovations.

INFRASTRUCTURE AND COST STRUCTURE OF AI

The cost structure of AI is dramatically different from traditional SaaS businesses. While R&D personnel costs may be lower, the cost of goods sold, primarily driven by operational infrastructure, is significantly higher. Patel emphasizes that this makes infrastructure efficiency paramount for AI companies. He touches upon the immense computational cost of training large models like GPT-4, estimating it to be in the hundreds of millions of dollars. This reinforces the idea that for hyperscalers, optimizing infrastructure is not just about cost savings but also a critical competitive advantage.
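To make the scale of such estimates concrete, the sketch below shows one common back-of-the-envelope way to price a training run. It assumes the widely used approximation of about 6 FLOPs per parameter per training token for dense transformers, and it treats utilization as the fraction of peak hardware throughput actually achieved. The function name and every input value are hypothetical; none of them are figures from the episode or for any specific model.

```python
def training_cost_usd(params, tokens, peak_flops_per_s, utilization, usd_per_gpu_hour):
    """Rough training cost under the ~6 * params * tokens FLOPs approximation (an assumption)."""
    total_flops = 6 * params * tokens
    # Effective throughput of one accelerator after accounting for utilization.
    effective_flops_per_s = peak_flops_per_s * utilization
    gpu_hours = total_flops / effective_flops_per_s / 3600
    return gpu_hours * usd_per_gpu_hour


# Hypothetical inputs chosen only for illustration.
cost = training_cost_usd(
    params=1e11,            # 100B parameters
    tokens=1e12,            # 1T training tokens
    peak_flops_per_s=1e15,  # peak throughput of one accelerator
    utilization=0.5,        # half of peak actually achieved
    usd_per_gpu_hour=2.0,   # rental price per accelerator-hour
)
print(f"Estimated cost: ${cost:,.0f}")
```

Because cost scales inversely with utilization, halving utilization doubles the bill, which is why infrastructure efficiency translates so directly into cost of goods sold.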

THE GPU RICH VS. GPU POOR DYNAMIC

Patel elaborates on the "GPU rich" and "GPU poor" concept, inspired by Google's Gemini. The "rich" are those with access to massive computational resources, primarily large tech companies like Google, OpenAI, and Microsoft. The "poor" are those without such access, including many startups and the broader open-source community. He points out the sheer volume of high-end GPUs being manufactured (hundreds of thousands per quarter) but notes that they are concentrated among a few major players. This disparity raises the question of which tasks are most impactful given the compute available, and it argues against spending effort on less critical work such as batch-size-one inference on expensive hardware or fine-tuning on gaming GPUs.

TPUS, PYTORCH, AND HARDWARE INNOVATION

Google's TPUs are a significant player. While TensorFlow was initially optimized for them, PyTorch, through libraries like PyTorch/XLA, is now making TPUs accessible and effective for external users. Patel acknowledges Google's improved focus on external customers for its TPU v5 offerings, which are positioned as a cost-effective compute solution. Despite PyTorch's dominance, he highlights innovations in compilers and kernel languages like Triton and Pallas, which aim to abstract away low-level hardware complexity and enable broader innovation across different hardware platforms.

UTILIZATION METRICS: MFU VS. MBU

A crucial distinction is made between training and inference hardware utilization. During training, Model FLOPs Utilization (MFU) is key, measuring how effectively the hardware's computational power is used. For inference, however, especially at low batch sizes such as one, memory bandwidth becomes the primary bottleneck, so Model Bandwidth Utilization (MBU) is the metric that matters. Patel explains that the ratio of FLOPs to memory bandwidth on GPUs is widening due to semiconductor scaling, exacerbating this inference-side challenge. Achieving high MBU is critical for low-latency, cost-effective inference, and current libraries such as Hugging Face's are criticized for their inefficiency in this regard.
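As a rough illustration of the two metrics, the sketch below computes MFU and MBU from measured throughput. It follows a commonly used convention: achieved rate divided by hardware peak, with the bytes moved per generated token at batch size one approximated as model weights plus KV cache. These formulas are an assumption rather than definitions given in the episode, and the function names and numbers are hypothetical.

```python
def model_flops_utilization(achieved_flops_per_s, peak_flops_per_s):
    """Fraction of the hardware's peak FLOP/s that the workload actually achieves."""
    return achieved_flops_per_s / peak_flops_per_s


def model_bandwidth_utilization(weight_bytes, kv_cache_bytes, tokens_per_s, peak_bytes_per_s):
    """Fraction of peak memory bandwidth used during decoding.

    Assumption: at batch size one, each generated token reads all weights
    and the KV cache from memory once.
    """
    achieved_bytes_per_s = (weight_bytes + kv_cache_bytes) * tokens_per_s
    return achieved_bytes_per_s / peak_bytes_per_s


# Hypothetical training measurement: 400 TFLOP/s achieved on a 1000 TFLOP/s part.
mfu = model_flops_utilization(400e12, 1000e12)

# Hypothetical inference measurement: 14 GB of weights, KV cache ignored,
# 50 tokens/s on hardware with 2 TB/s of memory bandwidth.
mbu = model_bandwidth_utilization(14e9, 0.0, 50.0, 2e12)

print(f"MFU = {mfu:.2f}, MBU = {mbu:.2f}")
```

Under the same assumption, peak bandwidth divided by bytes per token gives an upper bound on decoding speed (about 143 tokens per second for these hypothetical numbers), regardless of how many FLOPs the chip offers.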

THE CHALLENGES OF ALTERNATIVE HARDWARE

While NVIDIA dominates the GPU market, alternatives from AMD, Intel, and specialized AI chip startups are emerging. These often aim to offer better performance per dollar by accepting more modest margins and leveraging newer process nodes. However, they face significant hurdles: a complex and fragmented supply chain, the rapid pace of NVIDIA's innovation, and the immense difficulty of competing across all the necessary hardware design parameters beyond just FLOPs and memory. Patel suggests that for most, betting on established platforms like NVIDIA GPUs or Google TPUs, or leveraging advanced open-source software, is currently more pragmatic.

THE FUTURE OF AI DEVELOPMENT AND ACCELERATED SCALING

Patel discusses the potential for distributed AI training across multiple data centers, which could overcome the power and chip limits of a single data center and greatly accelerate scaling. He also touches on the role of open-source models and research, emphasizing their importance in democratizing AI and fostering innovation, even for smaller players. The rapid obsolescence of AI models is noted, making investment in fine-tuning older models potentially less viable than focusing on newer architectures or on-device applications. Safety concerns are acknowledged, with a perspective that open innovation, rather than obscurity, may be a better path to alignment.

THE COMPLEXITY OF THE SEMICONDUCTOR SUPPLY CHAIN

The semiconductor supply chain is described as extraordinarily complex and fragmented, involving specialized companies across the globe for everything from chemicals to manufacturing equipment. Replicating this in the US is deemed infeasible in the short to medium term due to the deep interconnectedness and monopolies in specific technological niches. Patel highlights how innovations often arise from this global dissemination of technology and expertise, making international collaboration a de facto necessity for progress, even as geopolitical considerations influence supply chain strategies.

Common Questions

The "GPU rich" vs. "GPU poor" framework categorizes entities by their access to high-end GPU compute. "GPU rich" entities have substantial access to cutting-edge hardware like NVIDIA's H100s or Google's TPUs, while "GPU poor" entities have limited access and often rely on older or less powerful hardware.

Mentioned in this video

The specific generation of Google's Tensor Processing Unit discussed, particularly for inference.

A critical component for AI hardware, discussed in relation to manufacturing capacity and NVIDIA's strategy.

Dynamic Random-Access Memory discussed in a SemiAnalysis post.

NVIDIA's flagship GPU, serving as a benchmark for performance and cost.

A type of flash storage whose manufacturing process was explained in a SemiAnalysis post.

AMD's GPU, mentioned as a competitor to NVIDIA's offerings.

Intel's AI accelerator chip, mentioned as being potentially better than H100 on paper but facing programming challenges.

Developing AI hardware to compete with NVIDIA, discussed regarding cost and performance.

Discussed for its massive TPU production, infrastructure advantages, and its role in AI hardware.

Mentioned for its libraries being inefficient for inference and its leaderboards being gamed.

Discussed as a major AI lab with massive compute needs and potential for extreme future valuation.

Taiwan Semiconductor Manufacturing Company, mentioned for its fabs and mask production influencing the global semiconductor supply chain.

Acquired by Intel; founded by Naveen Rao.

Investing in internal chips and potentially impacting NVIDIA's pricing.

An AI hardware startup whose strategy of prioritizing on-chip memory (SRAM) is discussed.

Mentioned for acquiring Nervana, shutting it down, and releasing new AI chips.

A new-age AI hardware startup making rational bets.

Investing in its own chips and exploring alternative hardware suppliers.

A key partner with Google in designing networking components for TPUs.

Acquired by Intel and subsequently shut down.

Formerly a networking company acquired by NVIDIA, discussed in the context of Google's partnership with Broadcom.

Mentioned as a company building boxes, indicating involvement in the AI hardware supply chain.

The dominant player in GPUs, discussed regarding manufacturing capacity, pricing, and future chip releases.

A new-age AI hardware startup making rational bets.

Discussed regarding its product philosophy, lack of rapid iteration in AI models, and potential distribution power.

An Austrian company whose tools are essential for advanced semiconductor manufacturing (e.g., <7nm and <2nm processes).

A Japanese company going public, prompting a SemiAnalysis post on its technology.

Highlighted as a company contributing significantly to open-source AI models.

An AI safety-focused lab that emerged from OpenAI, discussed in the context of accelerating AI development.

Mentioned for bragging about GPU acquisitions and its contributions to open-source AI models.

A technique developed by Together for efficient on-device inference, released as open source.

Author of the SemiAnalysis blog, discussing the state of semiconductors and AI hardware.

Author whose work on historical semiconductor innovation and the 'paranoid' philosophy is recommended.

Mentioned as having previously discussed mixture of experts on a podcast.

CEO of NVIDIA, credited with pivoting the company towards AI.

Founder of MosaicML and, previously, of Nervana, which was acquired by Intel.

Author of the internal Google document 'Meena Eats the World', predicting the dominance of LLMs in compute.

Mentioned for his research on asynchronous training and the 'Swarm' paper.

A large language model discussed in the context of inference performance, memory bandwidth requirements, and latency.

A version of PyTorch discussed in SemiAnalysis's recommended reading.

An earlier Google LLM discussed in the context of Noam Shazeer's influential internal document predicting LLM dominance.

Mentioned as an open-source model where 'crazy stuff' is being done, especially for adult content generation.

Apple's voice assistant, predicted to potentially use GPT-3.5 level capabilities in the future.

Discussed in relation to the scale of compute required for training and the cost estimation.

Mentioned as low-level programming that ideally users wouldn't have to write directly.

An open-source model whose relevance has quickly diminished, illustrating rapid model depreciation.

The dominant deep learning framework, discussed in the context of TPU compatibility and external user adoption.

A company working on running models on devices, mentioned for its open-source contributions.

A large open-source model whose parameter count is compared to GPT-3.5.

A future OpenAI model, discussed in terms of potential fundraising and impact.

Mentioned in the context of AI software costs and comparison to traditional SaaS businesses.

OpenAI's image generation model, contrasted with open-source capabilities.

Used as a benchmark for model quality and discussed regarding the difficulty of open-source models matching its capabilities.

Mentioned as a model that is no longer relevant due to newer, more capable models.

Google's LLM, discussed in the context of its training, inference, and dominance in Google's data centers.

More from Latent Space

View all 224 summaries 78 min

78 minWhen AI Agents Run Businesses — Lukas Petersson and Axel Backlund of Andon Labs

94 min

94 minScaling Past Informal AI - Carina Hong, Axiom Math

42 min

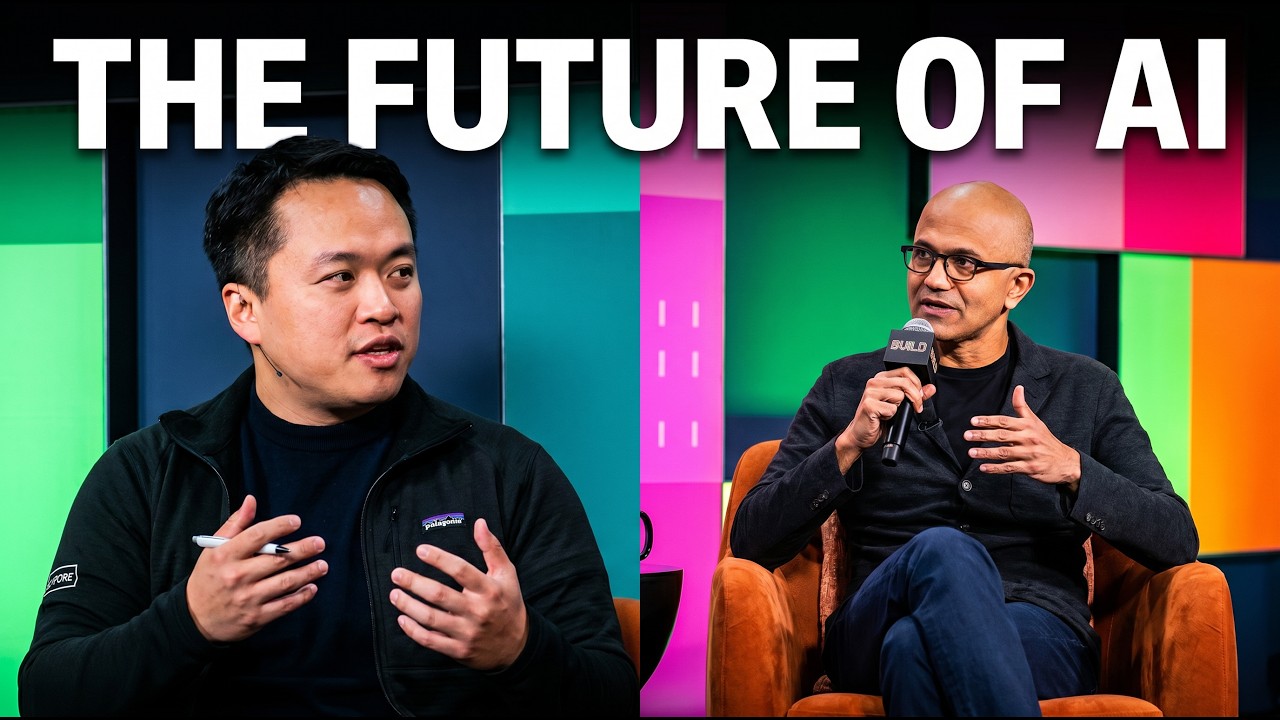

42 minSatya Nadella on AI: @NoPriorsPodcast x Latent Space Crossover Special at Microsoft Build 2026

85 min

85 minGitHub’s Agent Era: 14x Commits, 200M Developers, Copilot’s Next Act — Kyle Daigle

Ask anything from this episode.

Save it, chat with it, and connect it to Claude or ChatGPT. Get cited answers from the actual content — and build your own knowledge base of every podcast and video you care about.

Get Started Free