The Alignment Problem

A book by Brian Christian that examines AI safety questions objectively, from the perspective of a writer who is not an AI researcher.

Videos Mentioning The Alignment Problem

An AI Expert Warning: 6 People Are (Quietly) Deciding Humanity’s Future!

The Diary Of A CEO

A book by Brian Christian that examines AI safety questions objectively, from the perspective of a writer who is not an AI researcher.

Countdown to Superintelligence | Sam Harris and Daniel Kokotajlo (Making Sense #420)

Sam Harris

The challenge of ensuring AI systems reliably act in accordance with human intentions and values, especially as AI capabilities increase.

Debating the Future of AI: A Conversation with Marc Andreessen (Episode #324)

Sam Harris

The concern that highly intelligent AI might not share human values or goals, leading to dangerous outcomes.

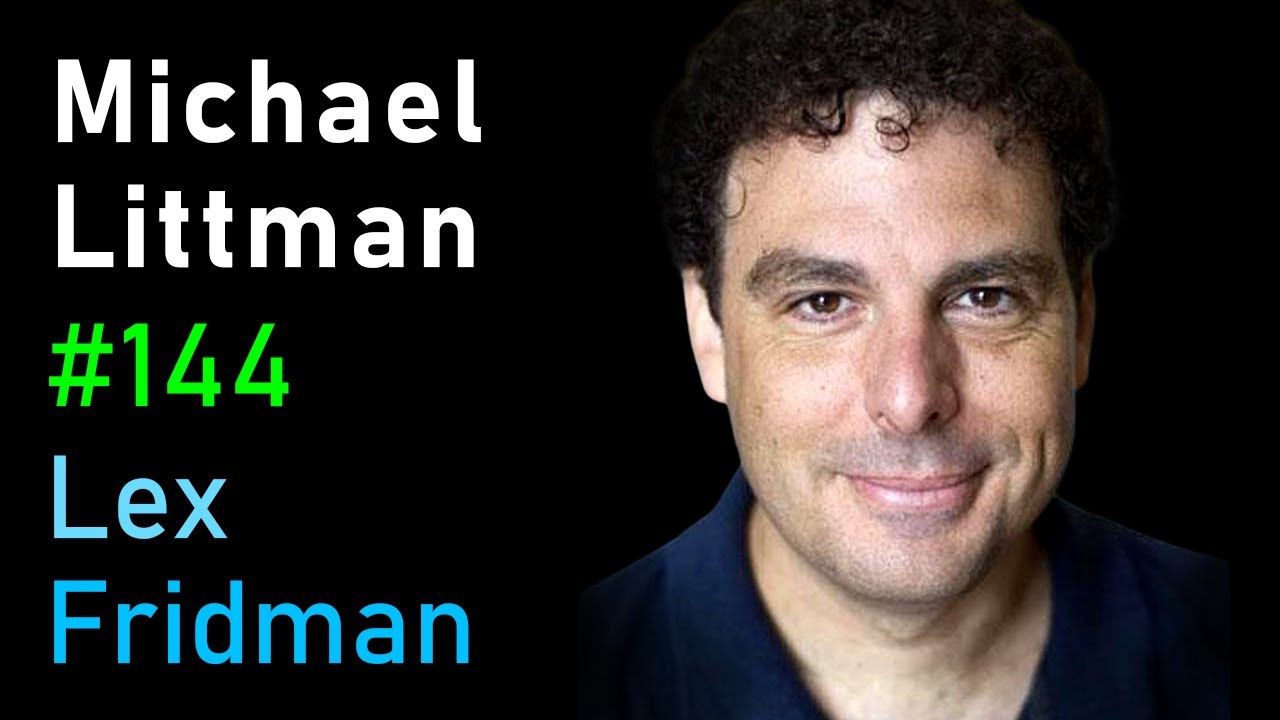

Michael Littman: Reinforcement Learning and the Future of AI | Lex Fridman Podcast #144

Lex Fridman

A book by Brian Christian that Michael Littman was reading at the time of the episode; it covers AI fairness, reinforcement learning, and the problem of aligning superintelligent AI.