GPT-2

2019 text-generating large language model

Videos Mentioning GPT-2

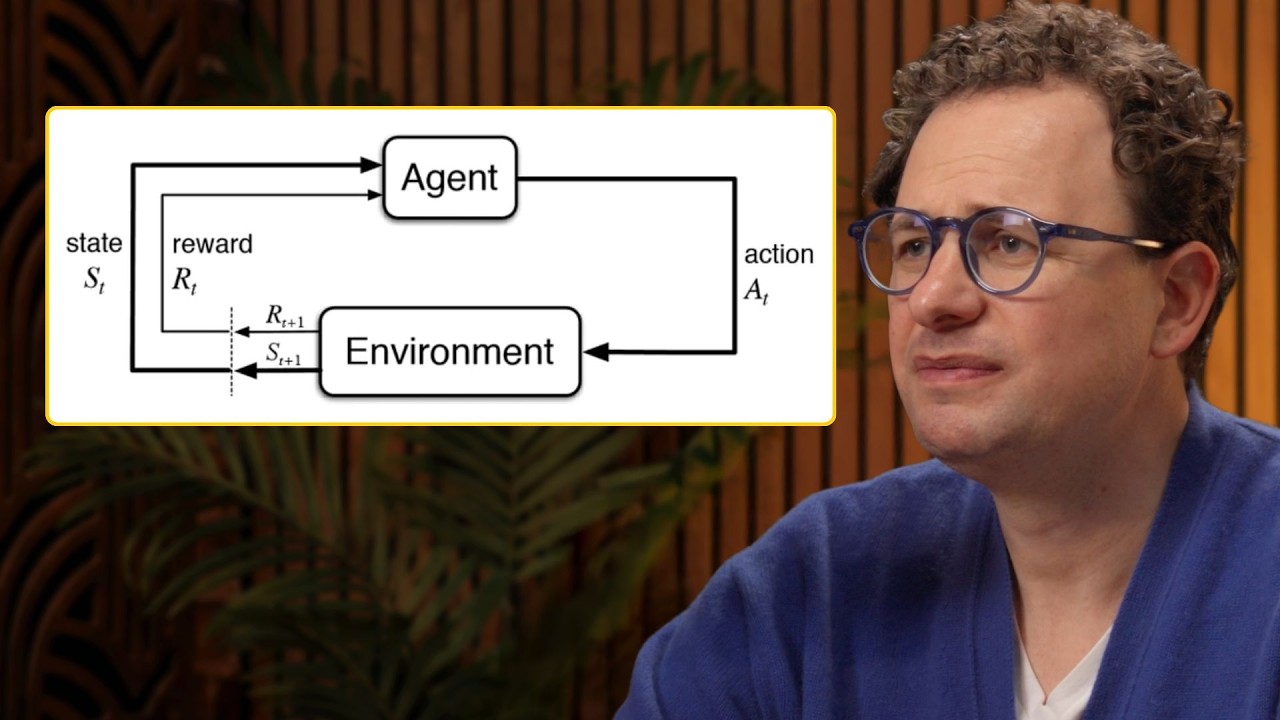

Is RL a dead end? – Dario Amodei

Dwarkesh Clips

An OpenAI language model that demonstrated broader generalization from internet-scale data.

Supervise the Process of AI Research — with Jungwon Byun and Andreas Stuhlmüller of Elicit

Latent Space

An early generative language model used by Elicit in its initial stages of research and product development.

Agents @ Work: Dust.tt — with Stanislas Polu

Latent Space

Mentioned as a previous generation of models in the research path at OpenAI.

Why Google failed to make GPT-3 -- with David Luan of Adept

Latent Space

An earlier version of OpenAI's language model, discussed as a pivotal paper and a demonstration of focused research efforts.

A Comprehensive Overview of Large Language Models - Latent Space Paper Club

Latent Space

An earlier version of OpenAI's GPT models, known for its generative capabilities.

Deep Dive into LLMs like ChatGPT

Andrej Karpathy

Building AGI in Real Time (OpenAI Dev Day 2024)

Latent Space

Mentioned as a point of reference for the exponential growth in AI capabilities, suggesting that o1 represents a similar 'scale moment' in AI development.

llm.c's Origin and the Future of LLM Compilers - Andrej Karpathy at CUDA MODE

Latent Space

A transformer-based language model developed by OpenAI. llm.c was able to train GPT-2, achieving results competitive with PyTorch.

Language Agents: From Reasoning to Acting — with Shunyu Yao of OpenAI, Harrison Chase of LangGraph

Latent Space

An earlier version of OpenAI's language models, noted for its size and potential risks at the time.

Is finetuning GPT4o worth it?

Latent Space

An earlier language model from OpenAI, mentioned in the context of the evolution of AI models.

The Origin and Future of RLHF: the secret ingredient for ChatGPT - with Nathan Lambert

Latent Space

An early OpenAI language model, mentioned in the context of the 'Learning to Summarize' experiment where initial RLHF techniques were applied.

Scaling Test Time Compute to Multi-Agent Civilizations — Noam Brown, OpenAI

Latent Space

Mentioned as a model that likely would not have benefited from reasoning paradigms, highlighting the need for a certain baseline capability in models before applying techniques like chain-of-thought.

FlashAttention-2: Making Transformers 800% faster AND exact

Latent Space

An earlier language model from OpenAI, cited as a point where the company recognized the potential of scaling.

Ilya Sutskever: Deep Learning | Lex Fridman Podcast #94

Lex Fridman

A large language model developed by OpenAI, based on the Transformer architecture, with 1.5 billion parameters, trained on a massive dataset of web text, capable of generating highly realistic and coherent text.

Jeremy Howard: fast.ai Deep Learning Courses and Research | Lex Fridman Podcast #35

Lex Fridman

A large language model developed by OpenAI as the successor to GPT, cited as another example of transfer learning in NLP.

Deep Learning State of the Art (2020)

Lex Fridman

An OpenAI language model discussed for its potential dangers, release strategy, and its limitations in common sense reasoning, though noted for impressive text generation.

Oriol Vinyals: DeepMind AlphaStar, StarCraft, and Language | Lex Fridman Podcast #20

Lex Fridman

A large language model by OpenAI, cited as an impressive example of current deep learning capabilities in language generation.

Greg Brockman: OpenAI and AGI | Lex Fridman Podcast #17

Lex Fridman

An advanced language model. OpenAI's decision not to release the full version is discussed as a test case for responsible disclosure in AI, highlighting concerns about fake news and biased content generation.

Imagining Education with Generative AI

MIT Open Learning

Information Theory for Language Models: Jack Morris

Latent Space

An OpenAI model that Jack Morris found interesting, though BERT was more popular at the time.

Ray Kurzweil: Singularity, Superintelligence, and Immortality | Lex Fridman Podcast #321

Lex Fridman

An earlier large-scale language model that did not work as well as its successor, GPT-3, highlighting the exponential growth needed for such models to be effective.

Personal benchmarks vs HumanEval - with Nicholas Carlini of DeepMind

Latent Space

An earlier language model that Carlini initially viewed as a toy before recognizing the practical utility of later models.

Noam Brown: AI vs Humans in Poker and Games of Strategic Negotiation | Lex Fridman Podcast #344

Lex Fridman

A large language model that, along with GPT-3, highlighted the rapid progress in AI and inspired the ambitious Diplomacy project.

Scott Aaronson: Computational Complexity and Consciousness | Lex Fridman Podcast #130

Lex Fridman

The predecessor to GPT-3, mentioned to illustrate how much GPT-3's capabilities advanced with increased network size and training data.