Transformer

Neural network architecture visualized and explained as the core model type for LLMs.

Videos Mentioning Transformer

Deep Dive into LLMs like ChatGPT

Andrej Karpathy

Neural network architecture visualized and explained as the core model type for LLMs.

The 10,000x Yolo Researcher Metagame — with Yi Tay of Reka

Latent Space

Yi Tay's early research at Google Brain focused on efficient Transformers, exploring alternatives to attention mechanisms and their broader applications.

LLM Asia Paper Club Survey Round

Latent Space

The underlying architecture for large language models, discussed in the context of how tokens are used for reasoning and how activations are processed.

![2024 in Post-Transformer Architectures: State Space Models, RWKV [Latent Space LIVE! @ NeurIPS 2024]](https://i.ytimg.com/vi/LPe6iC73lrc/maxresdefault.jpg)

2024 in Post-Transformer Architectures: State Space Models, RWKV [Latent Space LIVE! @ NeurIPS 2024]

Latent Space

The primary architecture discussed and contrasted with newer post-Transformer models, known for attention whose cost scales quadratically with sequence length.

Breaking down the OG GPT Paper by Alec Radford

Latent Space

The core architecture used in GPT, which relies on attention mechanisms to process sequential data efficiently.

![[Paper Club] Intro to Diffusion Models and OpenAI sCM: Simple, Stable, Scalable Consistency Models](https://i.ytimg.com/vi/epwgOz8mZMw/maxresdefault.jpg)

[Paper Club] Intro to Diffusion Models and OpenAI sCM: Simple, Stable, Scalable Consistency Models

Latent Space

Reference for positional embeddings, similar to Fourier embeddings used in the attention blocks of consistency models.

Yann LeCun: Dark Matter of Intelligence and Self-Supervised Learning | Lex Fridman Podcast #258

Lex Fridman

A neural network architecture, mentioned specifically for images, where it represents an image as non-overlapping patches that can be masked.

How DeepMind’s New AI Predicts What It Cannot See

Two Minute Papers

Language Agents: From Reasoning to Acting — with Shunyu Yao of OpenAI, Harrison Chase of LangGraph

Latent Space

A neural network architecture that has been influential in the development of large language models.

The Ultimate Guide to Prompting - with Sander Schulhoff from LearnPrompting.org

Latent Space

The underlying architecture of modern LLMs like GPT-3, mentioned in the context of role prompting potentially working better on older models than current Transformers.

Joscha Bach: Artificial Consciousness and the Nature of Reality | Lex Fridman Podcast #101

Lex Fridman

A type of neural network built on attention mechanisms, used in natural language processing to track identity and concepts within text.

The State of Silicon and the GPU Poors - with Dylan Patel of SemiAnalysis

Latent Space

The dominant architecture for LLMs, discussed as likely to continue reigning supreme.

FlashAttention-2: Making Transformers 800% faster AND exact

Latent Space

The foundational architecture for many modern NLP models, introduced in 2017, which popularized the attention mechanism.

Joscha Bach: Nature of Reality, Dreams, and Consciousness | Lex Fridman Podcast #212

Lex Fridman

An AI architecture, invented in 2017 for natural language processing, that GPT-3 and its successors use, enabling them to learn relationships between distant words in a large context.

Ishan Misra: Self-Supervised Deep Learning in Computer Vision | Lex Fridman Podcast #206

Lex Fridman

Discussed as an architecture for AI models, especially in natural language understanding, similar to those used by OpenAI.

Ilya Sutskever: Deep Learning | Lex Fridman Podcast #94

Lex Fridman

A neural network architecture that makes extensive use of attention mechanisms, becoming a foundational element for breakthroughs in natural language processing due to its efficiency on GPUs and non-recurrent nature.

Dmitri Dolgov: Waymo and the Future of Self-Driving Cars | Lex Fridman Podcast #147

Lex Fridman

A type of neural network architecture, noted for its breakthroughs in language models (like GPT-3), and its potential applicability to behavior prediction and decision-making in autonomous driving.

Chris Lattner: The Future of Computing and Programming Languages | Lex Fridman Podcast #131

Lex Fridman

A neural network architecture that GPT models are based on, characterized by its non-recurrent nature and simplicity, yet highly effective for learning language models.

Aravind Srinivas: Perplexity CEO on Future of AI, Search & the Internet | Lex Fridman Podcast #434

Lex Fridman

Dileep George: Brain-Inspired AI | Lex Fridman Podcast #115

Lex Fridman

A novel neural network architecture used in deep learning, particularly for natural language processing, which GPT-3 is based on.

Prelude To Power: 1931 Michael Faraday Celebration

Ri Archives

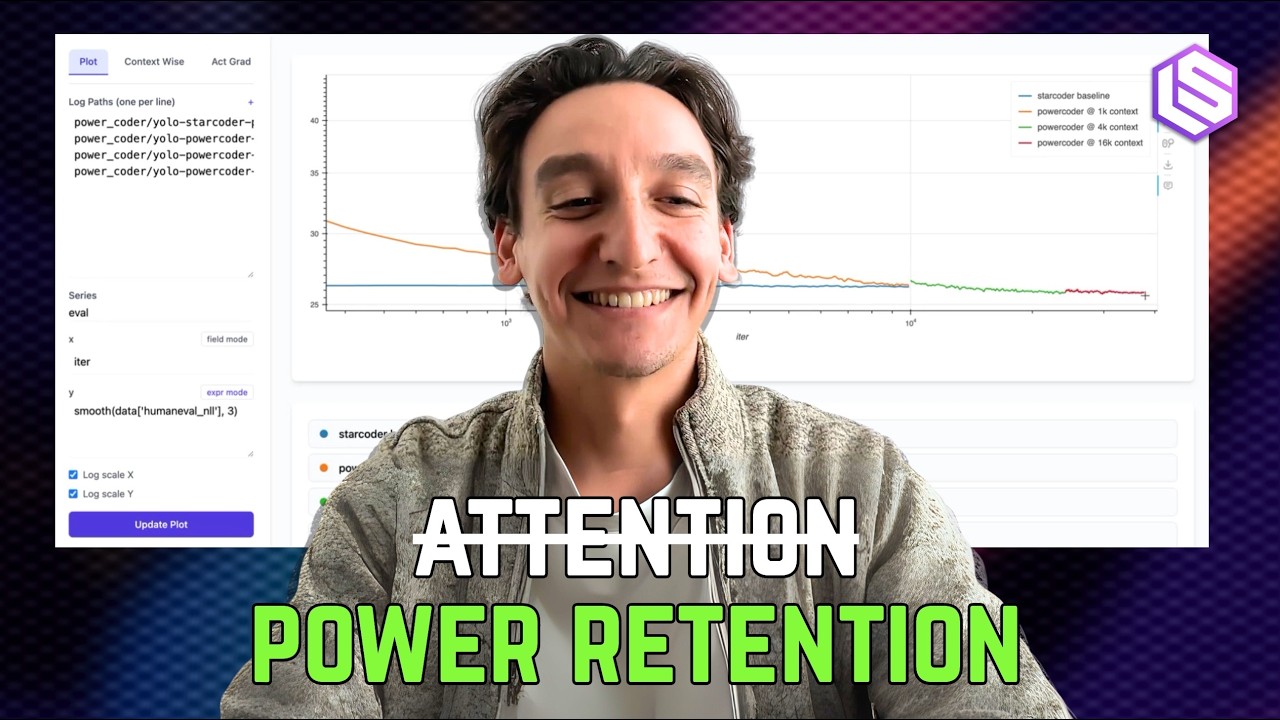

⚡️ Beyond Transformers with Power Retention

Latent Space

The foundational architecture that has dominated LLMs; its history and evolution are compared to the potential adoption of power retention.

Sergey Brin | All-In Summit 2024

All-In Podcast

The foundational architecture for language models, introduced in a paper by Google researchers, which Brin believes Google was initially too timid to deploy.

Oriol Vinyals: Deep Learning and Artificial General Intelligence | Lex Fridman Podcast #306

Lex Fridman

A neural network architecture that utilizes attention mechanisms, considered a powerful and stable approach for sequence modeling across various modalities.